mirror of

https://github.com/9001/copyparty.git

synced 2025-10-24 16:43:55 +00:00

Compare commits

173 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

b69aace8d8 | ||

|

|

79097bb43c | ||

|

|

806fac1742 | ||

|

|

4f97d7cf8d | ||

|

|

42acc457af | ||

|

|

c02920607f | ||

|

|

452885c271 | ||

|

|

5c242a07b6 | ||

|

|

088899d59f | ||

|

|

1faff2a37e | ||

|

|

23c8d3d045 | ||

|

|

a033388d2b | ||

|

|

82fe45ac56 | ||

|

|

bcb7fcda6b | ||

|

|

726a98100b | ||

|

|

2f021a0c2b | ||

|

|

eb05cb6c6e | ||

|

|

7530af95da | ||

|

|

8399e95bda | ||

|

|

3b4dfe326f | ||

|

|

2e787a254e | ||

|

|

f888bed1a6 | ||

|

|

d865e9f35a | ||

|

|

fc7fe70f66 | ||

|

|

5aff39d2b2 | ||

|

|

d1be37a04a | ||

|

|

b0fd8bf7d4 | ||

|

|

b9cf8f3973 | ||

|

|

4588f11613 | ||

|

|

1a618c3c97 | ||

|

|

d500a51d97 | ||

|

|

734e9d3874 | ||

|

|

bd5cfc2f1b | ||

|

|

89f88ee78c | ||

|

|

b2ae14695a | ||

|

|

19d86b44d9 | ||

|

|

85be62e38b | ||

|

|

80f3d90200 | ||

|

|

0249fa6e75 | ||

|

|

2d0696e048 | ||

|

|

ff32ec515e | ||

|

|

a6935b0293 | ||

|

|

63eb08ba9f | ||

|

|

e5b67d2b3a | ||

|

|

9e10af6885 | ||

|

|

42bc9115d2 | ||

|

|

0a569ce413 | ||

|

|

9a16639a61 | ||

|

|

57953c68c6 | ||

|

|

088d08963f | ||

|

|

7bc8196821 | ||

|

|

7715299dd3 | ||

|

|

b8ac9b7994 | ||

|

|

98e7d8f728 | ||

|

|

e7fd871ffe | ||

|

|

14aab62f32 | ||

|

|

cb81fe962c | ||

|

|

fc970d2dea | ||

|

|

b0e203d1f9 | ||

|

|

37cef05b19 | ||

|

|

5886a42901 | ||

|

|

2fd99f807d | ||

|

|

3d4cbd7d10 | ||

|

|

f10d03c238 | ||

|

|

f9a66ffb0e | ||

|

|

777a50063d | ||

|

|

0bb9154747 | ||

|

|

30c3f45072 | ||

|

|

0d5ca67f32 | ||

|

|

4a8bf6aebd | ||

|

|

b11db090d8 | ||

|

|

189391fccd | ||

|

|

86d4c43909 | ||

|

|

5994f40982 | ||

|

|

076d32dee5 | ||

|

|

16c8e38ecd | ||

|

|

eacbcda8e5 | ||

|

|

59be76cd44 | ||

|

|

5bb0e7e8b3 | ||

|

|

b78d207121 | ||

|

|

0fcbcdd08c | ||

|

|

ed6c683922 | ||

|

|

9fe1edb02b | ||

|

|

fb3811a708 | ||

|

|

18f8658eec | ||

|

|

3ead4676b0 | ||

|

|

d30001d23d | ||

|

|

06bbf0d656 | ||

|

|

6ddd952e04 | ||

|

|

027ad0c3ee | ||

|

|

3abad2b87b | ||

|

|

32a1c7c5d5 | ||

|

|

f06e165bd4 | ||

|

|

1c843b24f7 | ||

|

|

2ace9ed380 | ||

|

|

5f30c0ae03 | ||

|

|

ef60adf7e2 | ||

|

|

7354b462e8 | ||

|

|

da904d6be8 | ||

|

|

c5fbbbbb5c | ||

|

|

5010387d8a | ||

|

|

f00c54a7fb | ||

|

|

9f52c169d0 | ||

|

|

bf18339404 | ||

|

|

2ad12b074b | ||

|

|

a6788ffe8d | ||

|

|

0e884df486 | ||

|

|

ef1c55286f | ||

|

|

abc0424c26 | ||

|

|

44e5c82e6d | ||

|

|

5849c446ed | ||

|

|

12b7317831 | ||

|

|

fe323f59af | ||

|

|

a00e56f219 | ||

|

|

1a7852794f | ||

|

|

22b1373a57 | ||

|

|

17d78b1469 | ||

|

|

4d8b32b249 | ||

|

|

b65bea2550 | ||

|

|

0b52ccd200 | ||

|

|

3006a07059 | ||

|

|

801dbc7a9a | ||

|

|

4f4e895fb7 | ||

|

|

cc57c3b655 | ||

|

|

ca6ec9c5c7 | ||

|

|

633b1f0a78 | ||

|

|

6136b9bf9c | ||

|

|

524a3ba566 | ||

|

|

58580320f9 | ||

|

|

759b0a994d | ||

|

|

d2800473e4 | ||

|

|

f5b1a2065e | ||

|

|

5e62532295 | ||

|

|

c1bee96c40 | ||

|

|

f273253a2b | ||

|

|

012bbcf770 | ||

|

|

b54cb47b2e | ||

|

|

1b15f43745 | ||

|

|

96771bf1bd | ||

|

|

580078bddb | ||

|

|

c5c7080ec6 | ||

|

|

408339b51d | ||

|

|

02e3d44998 | ||

|

|

156f13ded1 | ||

|

|

d288467cb7 | ||

|

|

21662c9f3f | ||

|

|

9149fe6cdd | ||

|

|

9a146192b7 | ||

|

|

3a9d3b7b61 | ||

|

|

f03f0973ab | ||

|

|

7ec0881e8c | ||

|

|

59e1ab42ff | ||

|

|

722216b901 | ||

|

|

bd8f3dc368 | ||

|

|

33cd94a141 | ||

|

|

053ac74734 | ||

|

|

cced99fafa | ||

|

|

a009ff53f7 | ||

|

|

ca16c4108d | ||

|

|

d1b6c67dc3 | ||

|

|

a61f8133d5 | ||

|

|

38d797a544 | ||

|

|

16c1877f50 | ||

|

|

da5f15a778 | ||

|

|

396c64ecf7 | ||

|

|

252c3a7985 | ||

|

|

a3ecbf0ae7 | ||

|

|

314327d8f2 | ||

|

|

bfacd06929 | ||

|

|

4f5e8f8cf5 | ||

|

|

1fbb4c09cc | ||

|

|

b332e1992b | ||

|

|

5955940b82 |

40

.github/ISSUE_TEMPLATE/bug_report.md

vendored

Normal file

@@ -0,0 +1,40 @@

|

||||

---

|

||||

name: Bug report

|

||||

about: Create a report to help us improve

|

||||

title: ''

|

||||

labels: bug

|

||||

assignees: '9001'

|

||||

|

||||

---

|

||||

|

||||

NOTE:

|

||||

all of the below are optional, consider them as inspiration, delete and rewrite at will, thx md

|

||||

|

||||

|

||||

**Describe the bug**

|

||||

a description of what the bug is

|

||||

|

||||

**To Reproduce**

|

||||

List of steps to reproduce the issue, or, if it's hard to reproduce, then at least a detailed explanation of what you did to run into it

|

||||

|

||||

**Expected behavior**

|

||||

a description of what you expected to happen

|

||||

|

||||

**Screenshots**

|

||||

if applicable, add screenshots to help explain your problem, such as the kickass crashpage :^)

|

||||

|

||||

**Server details**

|

||||

if the issue is possibly on the server-side, then mention some of the following:

|

||||

* server OS / version:

|

||||

* python version:

|

||||

* copyparty arguments:

|

||||

* filesystem (`lsblk -f` on linux):

|

||||

|

||||

**Client details**

|

||||

if the issue is possibly on the client-side, then mention some of the following:

|

||||

* the device type and model:

|

||||

* OS version:

|

||||

* browser version:

|

||||

|

||||

**Additional context**

|

||||

any other context about the problem here

|

||||

22

.github/ISSUE_TEMPLATE/feature_request.md

vendored

Normal file

@@ -0,0 +1,22 @@

|

||||

---

|

||||

name: Feature request

|

||||

about: Suggest an idea for this project

|

||||

title: ''

|

||||

labels: enhancement

|

||||

assignees: '9001'

|

||||

|

||||

---

|

||||

|

||||

all of the below are optional, consider them as inspiration, delete and rewrite at will

|

||||

|

||||

**is your feature request related to a problem? Please describe.**

|

||||

a description of what the problem is, for example, `I'm always frustrated when [...]` or `Why is it not possible to [...]`

|

||||

|

||||

**Describe the idea / solution you'd like**

|

||||

a description of what you want to happen

|

||||

|

||||

**Describe any alternatives you've considered**

|

||||

a description of any alternative solutions or features you've considered

|

||||

|

||||

**Additional context**

|

||||

add any other context or screenshots about the feature request here

|

||||

10

.github/ISSUE_TEMPLATE/something-else.md

vendored

Normal file

@@ -0,0 +1,10 @@

|

||||

---

|

||||

name: Something else

|

||||

about: "┐(゚∀゚)┌"

|

||||

title: ''

|

||||

labels: ''

|

||||

assignees: ''

|

||||

|

||||

---

|

||||

|

||||

|

||||

7

.github/branch-rename.md

vendored

Normal file

@@ -0,0 +1,7 @@

|

||||

modernize your local checkout of the repo like so,

|

||||

```sh
git branch -m master hovudstraum
git fetch origin
git branch -u origin/hovudstraum hovudstraum
git remote set-head origin -a
```

|

||||

4

.gitignore

vendored

@@ -20,3 +20,7 @@ sfx/

|

||||

# derived

|

||||

copyparty/web/deps/

|

||||

srv/

|

||||

|

||||

# state/logs

|

||||

up.*.txt

|

||||

.hist/

|

||||

2

.vscode/launch.json

vendored

@@ -17,7 +17,7 @@

|

||||

"-mtp",

|

||||

".bpm=f,bin/mtag/audio-bpm.py",

|

||||

"-aed:wark",

|

||||

"-vsrv::r:aed:cnodupe",

|

||||

"-vsrv::r:rw,ed:c,dupe",

|

||||

"-vdist:dist:r"

|

||||

]

|

||||

},

|

||||

|

||||

1

.vscode/settings.json

vendored

@@ -55,4 +55,5 @@

|

||||

"py27"

|

||||

],

|

||||

"python.linting.enabled": true,

|

||||

"python.pythonPath": "/usr/bin/python3"

|

||||

}

|

||||

24

CODE_OF_CONDUCT.md

Normal file

@@ -0,0 +1,24 @@

|

||||

in the words of Abraham Lincoln:

|

||||

|

||||

> Be excellent to each other... and... PARTY ON, DUDES!

|

||||

|

||||

more specifically I'll paraphrase some examples from a german automotive corporation as they cover all the bases without being too wordy

|

||||

|

||||

## Examples of unacceptable behavior

|

||||

* intimidation, harassment, trolling

|

||||

* insulting, derogatory, harmful or prejudicial comments

|

||||

* posting private information without permission

|

||||

* political or personal attacks

|

||||

|

||||

## Examples of expected behavior

|

||||

* being nice, friendly, welcoming, inclusive, mindful and empathetic

|

||||

* acting considerate, modest, respectful

|

||||

* using polite and inclusive language

|

||||

* criticize constructively and accept constructive criticism

|

||||

* respect different points of view

|

||||

|

||||

## finally and even more specifically,

|

||||

* parse opinions and feedback objectively without prejudice

|

||||

* it's the message that matters, not who said it

|

||||

|

||||

aaand that's how you say `be nice` in a way that fills half a floppy w

|

||||

3

CONTRIBUTING.md

Normal file

@@ -0,0 +1,3 @@

|

||||

* do something cool

|

||||

|

||||

really tho, send a PR or an issue or whatever, all appreciated, anything goes, just behave aight

|

||||

353

README.md

@@ -8,52 +8,63 @@

|

||||

|

||||

turn your phone or raspi into a portable file server with resumable uploads/downloads using *any* web browser

|

||||

|

||||

* server runs on anything with `py2.7` or `py3.3+`

|

||||

* server only needs `py2.7` or `py3.3+`, all dependencies optional

|

||||

* browse/upload with IE4 / netscape4.0 on win3.11 (heh)

|

||||

* *resumable* uploads need `firefox 34+` / `chrome 41+` / `safari 7+` for full speed

|

||||

* code standard: `black`

|

||||

|

||||

📷 **screenshots:** [browser](#the-browser) // [upload](#uploading) // [thumbnails](#thumbnails) // [md-viewer](#markdown-viewer) // [search](#searching) // [fsearch](#file-search) // [zip-DL](#zip-downloads) // [ie4](#browser-support)

|

||||

📷 **screenshots:** [browser](#the-browser) // [upload](#uploading) // [unpost](#unpost) // [thumbnails](#thumbnails) // [search](#searching) // [fsearch](#file-search) // [zip-DL](#zip-downloads) // [md-viewer](#markdown-viewer) // [ie4](#browser-support)

|

||||

|

||||

|

||||

## readme toc

|

||||

|

||||

* top

|

||||

* [quickstart](#quickstart) - download [copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.py) and you're all set!

|

||||

* [quickstart](#quickstart) - download **[copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.py)** and you're all set!

|

||||

* [on servers](#on-servers) - you may also want these, especially on servers

|

||||

* [on debian](#on-debian) - recommended additional steps on debian

|

||||

* [notes](#notes) - general notes

|

||||

* [status](#status) - summary: all planned features work! now please enjoy the bloatening

|

||||

* [status](#status) - feature summary

|

||||

* [testimonials](#testimonials) - small collection of user feedback

|

||||

* [motivations](#motivations) - project goals / philosophy

|

||||

* [future plans](#future-plans) - some improvement ideas

|

||||

* [bugs](#bugs)

|

||||

* [general bugs](#general-bugs)

|

||||

* [not my bugs](#not-my-bugs)

|

||||

* [FAQ](#FAQ) - "frequently" asked questions

|

||||

* [accounts and volumes](#accounts-and-volumes) - per-folder, per-user permissions

|

||||

* [the browser](#the-browser) - accessing a copyparty server using a web-browser

|

||||

* [tabs](#tabs) - the main tabs in the ui

|

||||

* [hotkeys](#hotkeys) - the browser has the following hotkeys (always qwerty)

|

||||

* [hotkeys](#hotkeys) - the browser has the following hotkeys

|

||||

* [navpane](#navpane) - switching between breadcrumbs or navpane

|

||||

* [thumbnails](#thumbnails) - press `g` to toggle image/video thumbnails instead of the file listing

|

||||

* [thumbnails](#thumbnails) - press `g` to toggle grid-view instead of the file listing

|

||||

* [zip downloads](#zip-downloads) - download folders (or file selections) as `zip` or `tar` files

|

||||

* [uploading](#uploading) - web-browsers can upload using `bup` and `up2k`

|

||||

* [file-search](#file-search) - drop files/folders into up2k to see if they exist on the server

|

||||

* [uploading](#uploading) - drag files/folders into the web-browser to upload

|

||||

* [file-search](#file-search) - dropping files into the browser also lets you see if they exist on the server

|

||||

* [unpost](#unpost) - undo/delete accidental uploads

|

||||

* [file manager](#file-manager) - cut/paste, rename, and delete files/folders (if you have permission)

|

||||

* [batch rename](#batch-rename) - select some files and press F2 to bring up the rename UI

|

||||

* [batch rename](#batch-rename) - select some files and press `F2` to bring up the rename UI

|

||||

* [markdown viewer](#markdown-viewer) - and there are *two* editors

|

||||

* [other tricks](#other-tricks)

|

||||

* [searching](#searching) - search by size, date, path/name, mp3-tags, ...

|

||||

* [server config](#server-config)

|

||||

* [file indexing](#file-indexing)

|

||||

* [upload rules](#upload-rules) - set upload rules using volume flags, some examples

|

||||

* [upload rules](#upload-rules) - set upload rules using volume flags

|

||||

* [compress uploads](#compress-uploads) - files can be autocompressed on upload

|

||||

* [database location](#database-location) - can be stored in-volume (default) or elsewhere

|

||||

* [database location](#database-location) - in-volume (`.hist/up2k.db`, default) or somewhere else

|

||||

* [metadata from audio files](#metadata-from-audio-files) - set `-e2t` to index tags on upload

|

||||

* [file parser plugins](#file-parser-plugins) - provide custom parsers to index additional tags

|

||||

* [upload events](#upload-events) - trigger a script/program on each upload

|

||||

* [complete examples](#complete-examples)

|

||||

* [browser support](#browser-support) - TLDR: yes

|

||||

* [client examples](#client-examples) - interact with copyparty using non-browser clients

|

||||

* [up2k](#up2k) - quick outline of the up2k protocol, see [uploading](#uploading) for the web-client

|

||||

* [performance](#performance) - defaults are good for most cases

|

||||

* [why chunk-hashes](#why-chunk-hashes) - a single sha512 would be better, right?

|

||||

* [performance](#performance) - defaults are usually fine - expect `8 GiB/s` download, `1 GiB/s` upload

|

||||

* [security](#security) - some notes on hardening

|

||||

* [gotchas](#gotchas) - behavior that might be unexpected

|

||||

* [recovering from crashes](#recovering-from-crashes)

|

||||

* [client crashes](#client-crashes)

|

||||

* [frefox wsod](#frefox-wsod) - firefox 87 can crash during uploads

|

||||

* [dependencies](#dependencies) - mandatory deps

|

||||

* [optional dependencies](#optional-dependencies) - install these to enable bonus features

|

||||

* [install recommended deps](#install-recommended-deps)

|

||||

@@ -71,28 +82,31 @@ turn your phone or raspi into a portable file server with resumable uploads/down

|

||||

|

||||

## quickstart

|

||||

|

||||

download [copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.py) and you're all set!

|

||||

download **[copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.py)** and you're all set!

|

||||

|

||||

running the sfx without arguments (for example doubleclicking it on Windows) will give everyone full access to the current folder; see `-h` for help if you want [accounts and volumes](#accounts-and-volumes) etc

|

||||

running the sfx without arguments (for example doubleclicking it on Windows) will give everyone read/write access to the current folder; see `-h` for help if you want [accounts and volumes](#accounts-and-volumes) etc

|

||||

|

||||

some recommended options:

|

||||

* `-e2dsa` enables general file indexing, see [search configuration](#search-configuration)

|

||||

* `-e2dsa` enables general [file indexing](#file-indexing)

|

||||

* `-e2ts` enables audio metadata indexing (needs either FFprobe or Mutagen), see [optional dependencies](#optional-dependencies)

|

||||

* `-v /mnt/music:/music:r:rw,foo -a foo:bar` shares `/mnt/music` as `/music`, `r`eadable by anyone, and read-write for user `foo`, password `bar`

|

||||

* replace `:r:rw,foo` with `:r,foo` to only make the folder readable by `foo` and nobody else

|

||||

* see [accounts and volumes](#accounts-and-volumes) for the syntax and other access levels (`r`ead, `w`rite, `m`ove, `d`elete)

|

||||

* see [accounts and volumes](#accounts-and-volumes) for the syntax and other permissions (`r`ead, `w`rite, `m`ove, `d`elete, `g`et)

|

||||

* `--ls '**,*,ln,p,r'` to crash on startup if any of the volumes contain a symlink which point outside the volume, as that could give users unintended access
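to get a feel for what a check like `--ls '**,*,ln,p,r'` guards against, here is a minimal sketch of finding symlinks that point outside a volume. This is not copyparty's implementation; the function name `find_escaping_symlinks` is hypothetical, and it assumes a POSIX-style filesystem where all paths share one root:

```python
import os


def find_escaping_symlinks(volume_root):
    """Return (link, target) pairs for symlinks resolving outside volume_root.

    Illustrative only; not how copyparty itself performs the check.
    """
    root = os.path.realpath(volume_root)
    escaping = []
    # followlinks=False: symlinked folders are listed but never descended into
    for dirpath, dirnames, filenames in os.walk(root, followlinks=False):
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            if not os.path.islink(path):
                continue
            target = os.path.realpath(path)
            # the target is inside the volume only if root is a path-prefix of it
            if os.path.commonpath([root, target]) != root:
                escaping.append((path, target))
    return escaping
```

a startup script could refuse to continue if the returned list is non-empty, which is roughly the "crash on startup" behavior described above.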

|

||||

|

||||

|

||||

### on servers

|

||||

|

||||

you may also want these, especially on servers:

|

||||

|

||||

* [contrib/systemd/copyparty.service](contrib/systemd/copyparty.service) to run copyparty as a systemd service

|

||||

* [contrib/systemd/prisonparty.service](contrib/systemd/prisonparty.service) to run it in a chroot (for extra security)

|

||||

* [contrib/nginx/copyparty.conf](contrib/nginx/copyparty.conf) to reverse-proxy behind nginx (for better https)

|

||||

|

||||

|

||||

### on debian

|

||||

|

||||

recommended additional steps on debian

|

||||

|

||||

enable audio metadata and thumbnails (from images and videos):

|

||||

recommended additional steps on debian which enable audio metadata and thumbnails (from images and videos):

|

||||

|

||||

* as root, run the following:

|

||||

`apt install python3 python3-pip python3-dev ffmpeg`

|

||||

@@ -114,12 +128,12 @@ browser-specific:

|

||||

* Android-Chrome: increase "parallel uploads" for higher speed (android bug)

|

||||

* Android-Firefox: takes a while to select files (their fix for ☝️)

|

||||

* Desktop-Firefox: ~~may use gigabytes of RAM if your files are massive~~ *seems to be OK now*

|

||||

* Desktop-Firefox: may stop you from deleting folders you've uploaded until you visit `about:memory` and click `Minimize memory usage`

|

||||

* Desktop-Firefox: may stop you from deleting files you've uploaded until you visit `about:memory` and click `Minimize memory usage`

|

||||

|

||||

|

||||

## status

|

||||

|

||||

summary: all planned features work! now please enjoy the bloatening

|

||||

feature summary

|

||||

|

||||

* backend stuff

|

||||

* ☑ sanic multipart parser

|

||||

@@ -137,7 +151,7 @@ summary: all planned features work! now please enjoy the bloatening

|

||||

* ☑ [folders as zip / tar files](#zip-downloads)

|

||||

* ☑ FUSE client (read-only)

|

||||

* browser

|

||||

* ☑ navpane (directory tree sidebar)

|

||||

* ☑ [navpane](#navpane) (directory tree sidebar)

|

||||

* ☑ file manager (cut/paste, delete, [batch-rename](#batch-rename))

|

||||

* ☑ audio player (with OS media controls)

|

||||

* ☑ image gallery with webm player

|

||||

@@ -163,17 +177,51 @@ small collection of user feedback

|

||||

`good enough`, `surprisingly correct`, `certified good software`, `just works`, `why`

|

||||

|

||||

|

||||

# motivations

|

||||

|

||||

project goals / philosophy

|

||||

|

||||

* inverse linux philosophy -- do all the things, and do an *okay* job

|

||||

* quick drop-in service to get a lot of features in a pinch

|

||||

* there are probably [better alternatives](https://github.com/awesome-selfhosted/awesome-selfhosted) if you have specific/long-term needs

|

||||

* run anywhere, support everything

|

||||

* as many web-browsers and python versions as possible

|

||||

* every browser should at least be able to browse, download, upload files

|

||||

* be a good emergency solution for transferring stuff between ancient boxes

|

||||

* minimal dependencies

|

||||

* but optional dependencies adding bonus-features are ok

|

||||

* everything being plaintext makes it possible to proofread for malicious code

|

||||

* no preparations / setup necessary, just run the sfx (which is also plaintext)

|

||||

* adaptable, malleable, hackable

|

||||

* no build steps; modify the js/python without needing node.js or anything like that

|

||||

|

||||

|

||||

## future plans

|

||||

|

||||

some improvement ideas

|

||||

|

||||

* the JS is a mess -- a preact rewrite would be nice

|

||||

* preferably without build dependencies like webpack/babel/node.js, maybe a python thing to assemble js files into main.js

|

||||

* good excuse to look at using virtual lists (browsers start to struggle when folders contain over 5000 files)

|

||||

* the UX is a mess -- a proper design would be nice

|

||||

* very organic (much like the python/js), everything was an afterthought

|

||||

* true for both the layout and the visual flair

|

||||

* something like the tron board-room ui (or most other hollywood ones, like ironman) would be :100:

|

||||

* some of the python files are way too big

|

||||

* `up2k.py` ended up doing all the file indexing / db management

|

||||

* `httpcli.py` should be separated into modules in general

|

||||

|

||||

|

||||

# bugs

|

||||

|

||||

* Windows: python 3.7 and older cannot read tags with FFprobe, so use Mutagen or upgrade

|

||||

* Windows: python 2.7 cannot index non-ascii filenames with `-e2d`

|

||||

* Windows: python 2.7 cannot handle filenames with mojibake

|

||||

* `--th-ff-jpg` may fix video thumbnails on some FFmpeg versions

|

||||

* `--th-ff-jpg` may fix video thumbnails on some FFmpeg versions (macos, some linux)

|

||||

|

||||

## general bugs

|

||||

|

||||

* all volumes must exist / be available on startup; up2k (mtp especially) gets funky otherwise

|

||||

* cannot mount something at `/d1/d2/d3` unless `d2` exists inside `d1`

|

||||

* probably more, pls let me know

|

||||

|

||||

## not my bugs

|

||||

@@ -188,21 +236,34 @@ small collection of user feedback

|

||||

* use `--hist` or the `hist` volflag (`-v [...]:c,hist=/tmp/foo`) to place the db inside the vm instead

|

||||

|

||||

|

||||

# FAQ

|

||||

|

||||

"frequently" asked questions

|

||||

|

||||

* is it possible to block read-access to folders unless you know the exact URL for a particular file inside?

|

||||

* yes, using the [`g` permission](#accounts-and-volumes), see the examples there

|

||||

|

||||

* can I make copyparty download a file to my server if I give it a URL?

|

||||

* not officially, but there is a [terrible hack](https://github.com/9001/copyparty/blob/hovudstraum/bin/mtag/wget.py) which makes it possible

|

||||

|

||||

|

||||

# accounts and volumes

|

||||

|

||||

per-folder, per-user permissions

|

||||

* `-a usr:pwd` adds account `usr` with password `pwd`

|

||||

* `-v .::r` adds current-folder `.` as the webroot, `r`eadable by anyone

|

||||

* the syntax is `-v src:dst:perm:perm:...` so local-path, url-path, and one or more permissions to set

|

||||

* when granting permissions to an account, the names are comma-separated: `-v .::r,usr1,usr2:rw,usr3,usr4`

|

||||

* granting the same permissions to multiple accounts:

|

||||

`-v .::r,usr1,usr2:rw,usr3,usr4` = usr1/2 read-only, 3/4 read-write

|

||||

|

||||

permissions:

|

||||

* `r` (read): browse folder contents, download files, download as zip/tar

|

||||

* `w` (write): upload files, move files *into* folder

|

||||

* `m` (move): move files/folders *from* folder

|

||||

* `w` (write): upload files, move files *into* this folder

|

||||

* `m` (move): move files/folders *from* this folder

|

||||

* `d` (delete): delete files/folders

|

||||

* `g` (get): only download files, cannot see folder contents or zip/tar

|

||||

|

||||

example:

|

||||

examples:

|

||||

* add accounts named u1, u2, u3 with passwords p1, p2, p3: `-a u1:p1 -a u2:p2 -a u3:p3`

|

||||

* make folder `/srv` the root of the filesystem, read-only by anyone: `-v /srv::r`

|

||||

* make folder `/mnt/music` available at `/music`, read-only for u1 and u2, read-write for u3: `-v /mnt/music:music:r,u1,u2:rw,u3`

|

||||

@@ -211,6 +272,10 @@ example:

|

||||

* unauthorized users accessing the webroot can see that the `inc` folder exists, but cannot open it

|

||||

* `u1` can open the `inc` folder, but cannot see the contents, only upload new files to it

|

||||

* `u2` can browse it and move files *from* `/inc` into any folder where `u2` has write-access

|

||||

* make folder `/mnt/ss` available at `/i`, read-write for u1, get-only for everyone else, and enable accesskeys: `-v /mnt/ss:i:rw,u1:g:c,fk=4`

|

||||

* `c,fk=4` sets the `fk` volume-flag to 4, meaning each file gets a 4-character accesskey

|

||||

* `u1` can upload files, browse the folder, and see the generated accesskeys

|

||||

* other users cannot browse the folder, but can access the files if they have the full file URL with the accesskey
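the `-v src:dst:perm:perm:...` syntax can be easier to follow when taken apart step by step. The sketch below is an assumption-laden illustration, not copyparty's actual parser: `parse_volume_spec` is a hypothetical helper, it does not handle local paths that themselves contain `:` (such as Windows drive letters), and it treats a group starting with `c` as volume flags, as in the `c,fk=4` example above:

```python
def parse_volume_spec(spec):
    """Split 'src:dst:perm[,user...]:...' into its parts (illustrative only)."""
    # raises ValueError if the spec has fewer than two colon-separated fields
    src, dst, *groups = spec.split(":")
    perms = {}  # permission letters -> list of accounts ([] means everyone)
    flags = {}  # volume flags collected from 'c,...' groups
    for group in groups:
        head, *rest = group.split(",")
        if head == "c":
            for flag in rest:
                key, _, value = flag.partition("=")
                flags[key] = value if value else True
        else:
            perms[head] = rest
    return {"src": src, "dst": dst, "perms": perms, "flags": flags}


if __name__ == "__main__":
    print(parse_volume_spec("/mnt/ss:i:rw,u1:g:c,fk=4"))
```

an empty account list stands for "everyone", matching how `g` with no names grants get-only access to all other users in the example.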

|

||||

|

||||

|

||||

# the browser

|

||||

@@ -235,15 +300,15 @@ the main tabs in the ui

|

||||

|

||||

## hotkeys

|

||||

|

||||

the browser has the following hotkeys (always qwerty)

|

||||

* `B` toggle breadcrumbs / navpane

|

||||

the browser has the following hotkeys (always qwerty)

|

||||

* `B` toggle breadcrumbs / [navpane](#navpane)

|

||||

* `I/K` prev/next folder

|

||||

* `M` parent folder (or unexpand current)

|

||||

* `G` toggle list / grid view

|

||||

* `G` toggle list / [grid view](#thumbnails)

|

||||

* `T` toggle thumbnails / icons

|

||||

* `ctrl-X` cut selected files/folders

|

||||

* `ctrl-V` paste

|

||||

* `F2` rename selected file/folder

|

||||

* `F2` [rename](#batch-rename) selected file/folder

|

||||

* when a file/folder is selected (in not-grid-view):

|

||||

* `Up/Down` move cursor

|

||||

* shift+`Up/Down` select and move cursor

|

||||

@@ -270,7 +335,7 @@ the browser has the following hotkeys (always qwerty)

|

||||

* `M` mute

|

||||

* when the navpane is open:

|

||||

* `A/D` adjust tree width

|

||||

* in the grid view:

|

||||

* in the [grid view](#thumbnails):

|

||||

* `S` toggle multiselect

|

||||

* shift+`A/D` zoom

|

||||

* in the markdown editor:

|

||||

@@ -288,12 +353,14 @@ switching between breadcrumbs or navpane

|

||||

|

||||

click the `🌲` or press the `B` hotkey to toggle between the breadcrumbs path (default) and a navpane (tree-browser sidebar thing)

|

||||

|

||||

click `[-]` and `[+]` (or hotkeys `A`/`D`) to adjust the size, and the `[a]` toggles if the tree should widen dynamically as you go deeper or stay fixed-size

|

||||

* `[-]` and `[+]` (or hotkeys `A`/`D`) adjust the size

|

||||

* `[v]` jumps to the currently open folder

|

||||

* `[a]` toggles automatic widening as you go deeper

|

||||

|

||||

|

||||

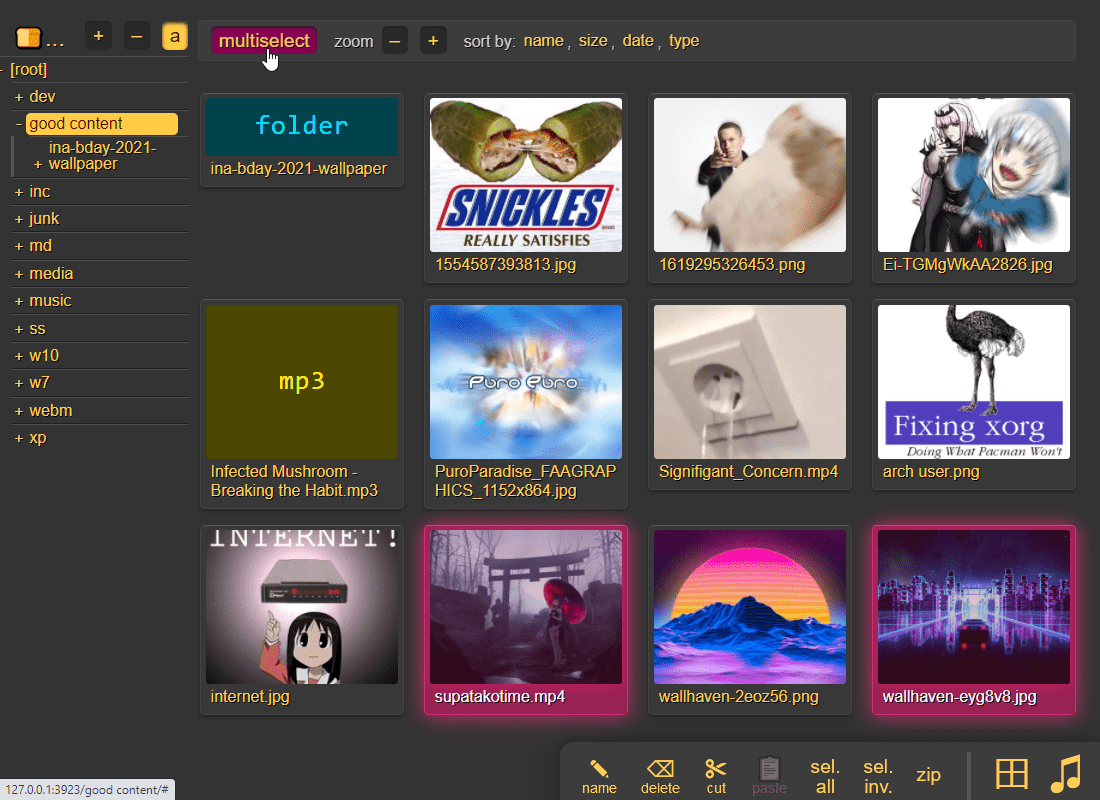

## thumbnails

|

||||

|

||||

press `g` to toggle image/video thumbnails instead of the file listing

|

||||

press `g` to toggle grid-view instead of the file listing, and `t` toggles icons / thumbnails

|

||||

|

||||

|

||||

|

||||

@@ -308,7 +375,7 @@ in the grid/thumbnail view, if the audio player panel is open, songs will start

|

||||

|

||||

download folders (or file selections) as `zip` or `tar` files

|

||||

|

||||

select which type of archive you want in the browser settings tab:

|

||||

select which type of archive you want in the `[⚙️] config` tab:

|

||||

|

||||

| name | url-suffix | description |

|

||||

|--|--|--|

|

||||

@@ -330,11 +397,13 @@ you can also zip a selection of files or folders by clicking them in the browser

|

||||

|

||||

## uploading

|

||||

|

||||

web-browsers can upload using `bup` and `up2k`:

|

||||

drag files/folders into the web-browser to upload

|

||||

|

||||

this initiates an upload using `up2k`; there are two uploaders available:

|

||||

* `[🎈] bup`, the basic uploader, supports almost every browser since netscape 4.0

|

||||

* `[🚀] up2k`, the fancy one

|

||||

|

||||

you can undo/delete uploads using `[🧯]` [unpost](#unpost)

|

||||

you can also undo/delete uploads by using `[🧯]` [unpost](#unpost)

|

||||

|

||||

up2k has several advantages:

|

||||

* you can drop folders into the browser (files are added recursively)

|

||||

@@ -355,36 +424,41 @@ see [up2k](#up2k) for details on how it works

|

||||

the up2k UI is the epitome of polished intuitive experiences:

|

||||

* "parallel uploads" specifies how many chunks to upload at the same time

|

||||

* `[🏃]` analysis of other files should continue while one is uploading

|

||||

* `[💭]` ask for confirmation before files are added to the list

|

||||

* `[💭]` ask for confirmation before files are added to the queue

|

||||

* `[💤]` sync uploading between other copyparty browser-tabs so only one is active

|

||||

* `[🔎]` switch between upload and file-search mode

|

||||

* `[🔎]` switch between upload and [file-search](#file-search) mode

|

||||

* ignore `[🔎]` if you add files by dragging them into the browser

|

||||

|

||||

and then there's the tabs below it,

|

||||

* `[ok]` is uploads which completed successfully

|

||||

* `[ng]` is the uploads which failed / got rejected (already exists, ...)

|

||||

* `[ok]` is the files which completed successfully

|

||||

* `[ng]` is the ones that failed / got rejected (already exists, ...)

|

||||

* `[done]` shows a combined list of `[ok]` and `[ng]`, chronological order

|

||||

* `[busy]` files which are currently hashing, pending-upload, or uploading

|

||||

* plus up to 3 entries each from `[done]` and `[que]` for context

|

||||

* `[que]` is all the files that are still queued

|

||||

|

||||

note that since up2k has to read each file twice, `[🎈 bup]` can *theoretically* be up to 2x faster in some extreme cases (files bigger than your ram, combined with an internet connection faster than the read-speed of your HDD, or if you're uploading from a cuo2duo)

|

||||

|

||||

if you are resuming a massive upload and want to skip hashing the files which already finished, you can enable `turbo` in the `[⚙️] config` tab, but please read the tooltip on that button

|

||||

|

||||

|

||||

### file-search

|

||||

|

||||

drop files/folders into up2k to see if they exist on the server

|

||||

dropping files into the browser also lets you see if they exist on the server

|

||||

|

||||

|

||||

|

||||

in the `[🚀 up2k]` tab, after toggling the `[🔎]` switch green, any files/folders you drop onto the dropzone will be hashed on the client-side. Each hash is sent to the server which checks if that file exists somewhere

|

||||

when you drag/drop files into the browser, you will see two dropzones: `Upload` and `Search`

|

||||

|

||||

> on a phone? toggle the `[🔎]` switch green before tapping the big yellow Search button to select your files

|

||||

|

||||

the files will be hashed on the client-side, and each hash is sent to the server, which checks if that file exists somewhere

|

||||

|

||||

files go into `[ok]` if they exist (and you get a link to where it is), otherwise they land in `[ng]`

|

||||

* the main reason filesearch is combined with the uploader is because the code was too spaghetti to separate it out somewhere else; this is no longer the case, but now i've warmed up to the idea too much

|

||||

|

||||

adding the same file multiple times is blocked, so if you first search for a file and then decide to upload it, you have to click the `[cleanup]` button to discard `[done]` files (or just refresh the page)

|

||||

|

||||

note that since up2k has to read the file twice, `[🎈 bup]` can be up to 2x faster in extreme cases (if your internet connection is faster than the read-speed of your HDD)

|

||||

|

||||

up2k has saved a few uploads from becoming corrupted in-transfer already; caught an android phone on wifi redhanded in wireshark with a bitflip, however bup with https would *probably* have noticed as well (thanks to tls also functioning as an integrity check)


### unpost

|

||||

|

||||

@@ -399,12 +473,22 @@ you can unpost even if you don't have regular move/delete access, however only f

|

||||

|

||||

cut/paste, rename, and delete files/folders (if you have permission)

|

||||

|

||||

file selection: click somewhere on the line (not the link itself), then:

|

||||

* `space` to toggle

|

||||

* `up/down` to move

|

||||

* `shift-up/down` to move-and-select

|

||||

* `ctrl-shift-up/down` to also scroll

|

||||

|

||||

* cut: select some files and `ctrl-x`

|

||||

* paste: `ctrl-v` in another folder

|

||||

* rename: `F2`

|

||||

|

||||

you can move files across browser tabs (cut in one tab, paste in another)

|

||||

|

||||

|

||||

## batch rename

|

||||

|

||||

select some files and press F2 to bring up the rename UI

|

||||

select some files and press `F2` to bring up the rename UI


@@ -464,6 +548,12 @@ and there are *two* editors

|

||||

|

||||

* if you are using media hotkeys to switch songs and are getting tired of seeing the OSD popup which Windows doesn't let you disable, consider https://ocv.me/dev/?media-osd-bgone.ps1

|

||||

|

||||

* click the bottom-left `π` to open a javascript prompt for debugging

|

||||

|

||||

* files named `.prologue.html` / `.epilogue.html` will be rendered before/after directory listings unless `--no-logues`

|

||||

|

||||

* files named `README.md` / `readme.md` will be rendered after directory listings unless `--no-readme` (but `.epilogue.html` takes precedence)

|

||||

|

||||

|

||||

## searching

|

||||

|

||||

@@ -486,36 +576,39 @@ add the argument `-e2ts` to also scan/index tags from music files, which brings

|

||||

|

||||

## file indexing

|

||||

|

||||

file indexing relies on two databases, the up2k filetree (`-e2d`) and the metadata tags (`-e2t`). Configuration can be done through arguments, volume flags, or a mix of both.

|

||||

file indexing relies on two database tables, the up2k filetree (`-e2d`) and the metadata tags (`-e2t`), stored in `.hist/up2k.db`. Configuration can be done through arguments, volume flags, or a mix of both.

|

||||

|

||||

through arguments:

|

||||

* `-e2d` enables file indexing on upload

|

||||

* `-e2ds` scans writable folders for new files on startup

|

||||

* `-e2dsa` scans all mounted volumes (including readonly ones)

|

||||

* `-e2ds` also scans writable folders for new files on startup

|

||||

* `-e2dsa` also scans all mounted volumes (including readonly ones)

|

||||

* `-e2t` enables metadata indexing on upload

|

||||

* `-e2ts` scans for tags in all files that don't have tags yet

|

||||

* `-e2tsr` deletes all existing tags, does a full reindex

|

||||

* `-e2ts` also scans for tags in all files that don't have tags yet

|

||||

* `-e2tsr` also deletes all existing tags, doing a full reindex

|

||||

|

||||

the same arguments can be set as volume flags, in addition to `d2d` and `d2t` for disabling:

|

||||

* `-v ~/music::r:c,e2dsa:c,e2tsr` does a full reindex of everything on startup

|

||||

* `-v ~/music::r:c,e2dsa,e2tsr` does a full reindex of everything on startup

|

||||

* `-v ~/music::r:c,d2d` disables **all** indexing, even if any `-e2*` are on

|

||||

* `-v ~/music::r:c,d2t` disables all `-e2t*` (tags), does not affect `-e2d*`

|

||||

|

||||

note:

|

||||

* the parser can finally handle `c,e2dsa,e2tsr` so you no longer have to `c,e2dsa:c,e2tsr`

|

||||

* `e2tsr` is probably always overkill, since `e2ds`/`e2dsa` would pick up any file modifications and `e2ts` would then reindex those, unless there is a new copyparty version with new parsers and the release note says otherwise

|

||||

* the rescan button in the admin panel has no effect unless the volume has `-e2ds` or higher

|

||||

|

||||

you can choose to only index filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash` or the volume-flag `:c,dhash`, this has the following consequences:

|

||||

to save some time, you can provide a regex pattern for filepaths to only index by filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash \.iso$` or the volume-flag `:c,nohash=\.iso$`, this has the following consequences:

|

||||

* initial indexing is way faster, especially when the volume is on a network disk

|

||||

* makes it impossible to [file-search](#file-search)

|

||||

* if someone uploads the same file contents, the upload will not be detected as a dupe, so it will not get symlinked or rejected
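as a rough illustration of the regex filter described above, this sketch decides per file whether its contents would get hashed. it assumes the pattern is searched anywhere in the filepath (so `\.iso$` matches on the end of the path); `should_hash` is a hypothetical helper for illustration, not copyparty's own code:

```python
import re


# hypothetical helper; assumes the pattern is searched anywhere in the path
def should_hash(path, nohash_pattern=None):
    """Return False when the path matches the no-hash pattern."""
    if not nohash_pattern:
        # no pattern configured, so every file gets hashed
        return True
    return re.search(nohash_pattern, path) is None


for p in ["music/album/01.flac", "images/debian.iso", "iso/readme.txt"]:
    print(p, should_hash(p, r"\.iso$"))
```

files where this returns `False` would only be indexed by filename/path/size/last-modified, which is why file-search and dupe detection stop working for them.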

|

||||

|

||||

if you set `--no-hash`, you can enable hashing for specific volumes using flag `:c,ehash`

|

||||

similarly, you can fully ignore files/folders using `--no-idx [...]` and `:c,noidx=\.iso$`

|

||||

|

||||

if you set `--no-hash [...]` globally, you can enable hashing for specific volumes using flag `:c,nohash=`

|

||||

|

||||

|

||||

## upload rules

|

||||

|

||||

set upload rules using volume flags, some examples:

|

||||

set upload rules using volume flags, some examples:

|

||||

|

||||

* `:c,sz=1k-3m` sets allowed filesize between 1 KiB and 3 MiB inclusive (suffixes: b, k, m, g)

|

||||

* `:c,nosub` disallow uploading into subdirectories; goes well with `rotn` and `rotf`:

|

||||

@@ -534,9 +627,7 @@ you can also set transaction limits which apply per-IP and per-volume, but these

|

||||

|

||||

## compress uploads

|

||||

|

||||

files can be autocompressed on upload

|

||||

|

||||

compression is either on user-request (if config allows) or forced by server-config

|

||||

files can be autocompressed on upload, either on user-request (if config allows) or forced by server-config

|

||||

|

||||

* volume flag `gz` allows gz compression

|

||||

* volume flag `xz` allows lzma compression

|

||||

@@ -557,7 +648,7 @@ some examples,

|

||||

|

||||

## database location

|

||||

|

||||

can be stored in-volume (default) or elsewhere

|

||||

in-volume (`.hist/up2k.db`, default) or somewhere else

|

||||

|

||||

copyparty creates a subfolder named `.hist` inside each volume where it stores the database, thumbnails, and some other stuff

|

||||

|

||||

@@ -579,13 +670,13 @@ set `-e2t` to index tags on upload

|

||||

|

||||

if you add/remove a tag from `mte` you will need to run with `-e2tsr` once to rebuild the database, otherwise only new files will be affected

|

||||

|

||||

but instead of using `-mte`, `-mth` is a better way to hide tags in the browser: these tags will not be displayed by default, but they still get indexed and become searchable, and users can choose to unhide them in the settings pane

|

||||

but instead of using `-mte`, `-mth` is a better way to hide tags in the browser: these tags will not be displayed by default, but they still get indexed and become searchable, and users can choose to unhide them in the `[⚙️] config` pane

|

||||

|

||||

`-mtm` can be used to add or redefine a metadata mapping, say you have media files with `foo` and `bar` tags and you want them to display as `qux` in the browser (preferring `foo` if both are present), then do `-mtm qux=foo,bar` and now you can `-mte artist,title,qux`
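the `qux=foo,bar` example above can be sketched like this; a minimal illustration of the "first source tag that exists wins" rule, where `parse_mtm` and `apply_mtm` are hypothetical names and the dict-of-tags representation is an assumption:

```python
def parse_mtm(spec):
    """Split 'qux=foo,bar' into ('qux', ['foo', 'bar'])."""
    name, sources = spec.split("=", 1)
    return name, sources.split(",")


def apply_mtm(tags, spec):
    """Return a copy of tags with the mapped tag taken from the first source present."""
    name, sources = parse_mtm(spec)
    out = dict(tags)
    for src in sources:
        if src in tags:
            # sources are listed in order of preference, so stop at the first hit
            out[name] = tags[src]
            break
    return out


print(apply_mtm({"foo": "from-foo", "bar": "from-bar"}, "qux=foo,bar"))
```

a file which only has `bar` would get `qux` from `bar`, and a file with neither tag would get no `qux` at all.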

|

||||

|

||||

tags that start with a `.` such as `.bpm` and `.dur`(ation) indicate numeric value

|

||||

|

||||

see the beautiful mess of a dictionary in [mtag.py](https://github.com/9001/copyparty/blob/master/copyparty/mtag.py) for the default mappings (should cover mp3,opus,flac,m4a,wav,aif,)

|

||||

see the beautiful mess of a dictionary in [mtag.py](https://github.com/9001/copyparty/blob/hovudstraum/copyparty/mtag.py) for the default mappings (should cover mp3,opus,flac,m4a,wav,aif,)

|

||||

|

||||

`--no-mutagen` disables Mutagen and uses FFprobe instead, which...

|

||||

* is about 20x slower than Mutagen

|

||||

@@ -611,6 +702,25 @@ copyparty can invoke external programs to collect additional metadata for files

|

||||

* `-mtp arch,built,ver,orig=an,eexe,edll,~/bin/exe.py` runs `~/bin/exe.py` to get properties about windows-binaries only if file is not audio (`an`) and file extension is exe or dll

|

||||

|

||||

|

||||

## upload events

|

||||

|

||||

trigger a script/program on each upload like so:

|

||||

|

||||

```

|

||||

-v /mnt/inc:inc:w:c,mte=+a1:c,mtp=a1=ad,/usr/bin/notify-send

|

||||

```

|

||||

|

||||

so filesystem location `/mnt/inc` shared at `/inc`, write-only for everyone, appending `a1` to the list of tags to index, and using `/usr/bin/notify-send` to "provide" that tag

|

||||

|

||||

that'll run the command `notify-send` with the path to the uploaded file as the first and only argument (so on linux it'll show a notification on-screen)

|

||||

|

||||

note that it will only trigger on new unique files, not dupes

|

||||

|

||||

and it will occupy the parsing threads, so fork anything expensive, or if you want to intentionally queue/singlethread you can combine it with `--no-mtag-mt`

|

||||

|

||||

if this becomes popular maybe there should be a less janky way to do it actually
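a minimal sketch of a hook script to use instead of `notify-send`, assuming only what is described above: the uploaded file's path arrives as the first and only argument, and anything slow should be forked so the parsing thread is released quickly. the script name and the `gzip -k` job are placeholders, not something copyparty provides:

```python
#!/usr/bin/env python3
import subprocess
import sys


def build_cmd(path):
    """Command for the slow background job; `gzip -k` is only a placeholder."""
    # "--" keeps filenames that start with a dash from being read as options
    return ["gzip", "-k", "--", path]


def main(path):
    # start the slow job without waiting for it, so this script returns right away
    subprocess.Popen(
        build_cmd(path),
        stdin=subprocess.DEVNULL,
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
        start_new_session=True,  # detach from the parent (POSIX only)
    )


if __name__ == "__main__" and len(sys.argv) == 2:
    main(sys.argv[1])
```

if you want the jobs to run one at a time instead, drop the `Popen` detaching, run the command synchronously, and combine it with `--no-mtag-mt` as mentioned above.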

|

||||

|

||||

|

||||

## complete examples

|

||||

|

||||

* read-only music server with bpm and key scanning

|

||||

@@ -641,29 +751,27 @@ TLDR: yes

|

||||

| image viewer | - | yep | yep | yep | yep | yep | yep | yep |

|

||||

| video player | - | yep | yep | yep | yep | yep | yep | yep |

|

||||

| markdown editor | - | - | yep | yep | yep | yep | yep | yep |

|

||||

| markdown viewer | - | - | yep | yep | yep | yep | yep | yep |

|

||||

| markdown viewer | - | yep | yep | yep | yep | yep | yep | yep |

|

||||

| play mp3/m4a | - | yep | yep | yep | yep | yep | yep | yep |

|

||||

| play ogg/opus | - | - | - | - | yep | yep | `*3` | yep |

|

||||

| **= feature =** | ie6 | ie9 | ie10 | ie11 | ff 52 | c 49 | iOS | Andr |

|

||||

|

||||

* internet explorer 6 to 8 behave the same

|

||||

* firefox 52 and chrome 49 are the last winxp versions

|

||||

* `*1` yes, but extremely slow (ie10: 1 MiB/s, ie11: 270 KiB/s)

|

||||

* firefox 52 and chrome 49 are the final winxp versions

|

||||

* `*1` yes, but extremely slow (ie10: `1 MiB/s`, ie11: `270 KiB/s`)

|

||||

* `*2` causes a full-page refresh on each navigation

|

||||

* `*3` using a wasm decoder which can sometimes get stuck and consumes a bit more power

|

||||

* `*3` using a wasm decoder which consumes a bit more power

|

||||

|

||||

quick summary of more eccentric web-browsers trying to view a directory index:

|

||||

|

||||

| browser | will it blend |

|

||||

| ------- | ------------- |

|

||||

| **safari** (14.0.3/macos) | is chrome with janky wasm, so playing opus can deadlock the javascript engine |

|

||||

| **safari** (14.0.1/iOS) | same as macos, except it recovers from the deadlocks if you poke it a bit |

|

||||

| **links** (2.21/macports) | can browse, login, upload/mkdir/msg |

|

||||

| **lynx** (2.8.9/macports) | can browse, login, upload/mkdir/msg |

|

||||

| **w3m** (0.5.3/macports) | can browse, login, upload at 100kB/s, mkdir/msg |

|

||||

| **netsurf** (3.10/arch) | is basically ie6 with much better css (javascript has almost no effect) |

|

||||

| **opera** (11.60/winxp) | OK: thumbnails, image-viewer, zip-selection, rename/cut/paste. NG: up2k, navpane, markdown, audio |

|

||||

| **ie4** and **netscape** 4.0 | can browse (text is yellow on white), upload with `?b=u` |

|

||||

| **ie4** and **netscape** 4.0 | can browse, upload with `?b=u` |

|

||||

| **SerenityOS** (7e98457) | hits a page fault, works with `?b=u`, file upload not-impl |

|

||||

|

||||

|

||||

@@ -683,6 +791,14 @@ interact with copyparty using non-browser clients

|

||||

* `chunk(){ curl -b cppwd=wark -T- http://127.0.0.1:3923/;}`

|

||||

`chunk <movie.mkv`

|

||||

|

||||

* bash: when curl and wget are not available or too boring

|

||||

* `(printf 'PUT /junk?pw=wark HTTP/1.1\r\n\r\n'; cat movie.mkv) | nc 127.0.0.1 3923`

|

||||

* `(printf 'PUT / HTTP/1.1\r\n\r\n'; cat movie.mkv) >/dev/tcp/127.0.0.1/3923`

|

||||

|

||||

* python: [up2k.py](https://github.com/9001/copyparty/blob/hovudstraum/bin/up2k.py) is a command-line up2k client [(webm)](https://ocv.me/stuff/u2cli.webm)

|

||||

* file uploads, file-search, autoresume of aborted/broken uploads

|

||||

* see [./bin/README.md#up2kpy](bin/README.md#up2kpy)

|

||||

|

||||

* FUSE: mount a copyparty server as a local filesystem

|

||||

* cross-platform python client available in [./bin/](bin/)

|

||||

* [rclone](https://rclone.org/) as client can give ~5x performance, see [./docs/rclone.md](docs/rclone.md)
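the curl and netcat one-liners above can also be sketched with just the python standard library. this assumes the same things the shell examples do (a server on `127.0.0.1:3923`, password `wark` sent as the `cppwd` cookie); `build_put` and `upload` are hypothetical helper names:

```python
import urllib.request


def build_put(url, data, password=None):
    """Build (but do not send) a PUT request carrying the password as a cookie."""
    req = urllib.request.Request(url, data=data, method="PUT")
    if password:
        req.add_header("Cookie", "cppwd=" + password)
    return req


def upload(url, data, password=None):
    """Send the PUT and return the response body as text."""
    with urllib.request.urlopen(build_put(url, data, password)) as rsp:
        return rsp.read().decode("utf-8", "replace")
```

usage would be something like `upload("http://127.0.0.1:3923/junk", open("movie.mkv", "rb").read(), "wark")`; note that this reads the whole file into memory, so for big files the up2k.py client above is the better choice.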

|

||||

@@ -694,6 +810,8 @@ copyparty returns a truncated sha512sum of your PUT/POST as base64; you can gene

|

||||

b512(){ printf "$((sha512sum||shasum -a512)|sed -E 's/ .*//;s/(..)/\\x\1/g')"|base64|tr '+/' '-_'|head -c44;}

|

||||

b512 <movie.mkv
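for systems without those shell tools, the same steps as the `b512` shell function (sha512, base64 with `+/` swapped for `-_`, first 44 characters) can be sketched in python; the function names are just for illustration:

```python
import base64
import hashlib


def b512(data):
    """sha512 -> url-safe base64 -> first 44 characters, like the shell function."""
    digest = hashlib.sha512(data).digest()
    return base64.urlsafe_b64encode(digest)[:44].decode("ascii")


def b512_file(path, bufsize=1024 * 1024):
    """Same checksum for a file on disk, read in blocks to keep memory use low."""
    h = hashlib.sha512()
    with open(path, "rb") as f:
        for buf in iter(lambda: f.read(bufsize), b""):
            h.update(buf)
    return base64.urlsafe_b64encode(h.digest())[:44].decode("ascii")
```

`b512_file("movie.mkv")` should then give the same string as `b512 <movie.mkv` in the shell.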

|

||||

|

||||

you can provide passwords using cookie 'cppwd=hunter2', as a url query `?pw=hunter2`, or with basic-authentication (either as the username or password)

|

||||

|

||||

|

||||

# up2k

|

||||

|

||||

@@ -710,19 +828,32 @@ quick outline of the up2k protocol, see [uploading](#uploading) for the web-clie

|

||||

* server writes chunks into place based on the hash

|

||||

* client does another handshake with the hashlist; server replies with OK or a list of chunks to reupload

|

||||

|

||||

up2k has saved a few uploads from becoming corrupted in-transfer already; caught an android phone on wifi redhanded in wireshark with a bitflip, however bup with https would *probably* have noticed as well (thanks to tls also functioning as an integrity check)

|

||||

|

||||

|

||||

## why chunk-hashes

|

||||

|

||||

a single sha512 would be better, right?

|

||||

|

||||

this is due to `crypto.subtle` not providing a streaming api (or the option to seed the sha512 hasher with a starting hash)

|

||||

|

||||

as a result, the hashes are much less useful than they could have been (search the server by sha512, provide the sha512 in the response http headers, ...)

|

||||

|

||||

hashwasm would solve the streaming issue but reduces hashing speed for sha512 (xxh128 does 6 GiB/s), and it would make old browsers and [iphones](https://bugs.webkit.org/show_bug.cgi?id=228552) unsupported
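to make the tradeoff above concrete, here is a small sketch of the two approaches: hashing each chunk on its own (all you can do with a one-shot digest api) versus feeding the same chunks into a single streaming hasher. the 1 MiB chunk size and the function names are arbitrary assumptions for illustration; this is not the actual up2k chunk size or wire format:

```python
import hashlib


def chunk_hashes(data, chunk_size=1024 * 1024):
    """Hash each chunk independently, as a non-streaming API forces you to."""
    return [
        hashlib.sha512(data[i : i + chunk_size]).hexdigest()
        for i in range(0, len(data), chunk_size)
    ]


def whole_hash(data, chunk_size=1024 * 1024):
    """Feed the same chunks into one hasher; this needs a streaming API."""
    h = hashlib.sha512()
    for i in range(0, len(data), chunk_size):
        h.update(data[i : i + chunk_size])
    return h.hexdigest()
```

the list from `chunk_hashes` lets a server verify and place each chunk separately, but it cannot be turned into the plain sha512 of the file, which is why the hashes are less useful for things like searching by sha512.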

|

||||

|

||||

|

||||

# performance

|

||||

|

||||

defaults are good for most cases

|

||||

defaults are usually fine - expect `8 GiB/s` download, `1 GiB/s` upload

|

||||

|

||||

you can ignore the `cannot efficiently use multiple CPU cores` message, it's very unlikely to be a problem

|

||||

you can ignore the `cannot efficiently use multiple CPU cores` message, very unlikely to be a problem

|

||||

|

||||

below are some tweaks roughly ordered by usefulness:

|

||||

|

||||

* `-q` disables logging and can help a bunch, even when combined with `-lo` to redirect logs to file

|

||||

* `--http-only` or `--https-only` (unless you want to support both protocols) will reduce the delay before a new connection is established

|

||||

* `--hist` pointing to a fast location (ssd) will make directory listings and searches faster when `-e2d` or `-e2t` is set

|

||||

* `--no-hash` when indexing a network-disk if you don't care about the actual filehashes and only want the names/tags searchable

|

||||

* `--no-hash .` when indexing a network-disk if you don't care about the actual filehashes and only want the names/tags searchable

|

||||

* `-j` enables multiprocessing (actual multithreading) and can make copyparty perform better in cpu-intensive workloads, for example:

|

||||

* huge amount of short-lived connections

|

||||

* really heavy traffic (downloads/uploads)

|

||||

@@ -730,6 +861,49 @@ below are some tweaks roughly ordered by usefulness:

|

||||

...however it adds an overhead to internal communication so it might be a net loss, see if it works 4 u

|

||||

|

||||

|

||||

# security

|

||||

|

||||

some notes on hardening

|

||||

|

||||

on public copyparty instances with anonymous upload enabled:

|

||||

|

||||

* users can upload html/css/js which will evaluate for other visitors in a few ways,

|

||||

* unless `--no-readme` is set: by uploading/modifying a file named `readme.md`

|

||||

* if `move` access is granted AND none of `--no-logues`, `--no-dot-mv`, `--no-dot-ren` is set: by uploading some .html file and renaming it to `.epilogue.html` (uploading it directly is blocked)

|

||||

|

||||

other misc:

|

||||

|

||||

* you can disable directory listings by giving permission `g` instead of `r`, only accepting direct URLs to files

|

||||

* combine this with volume-flag `c,fk` to generate per-file accesskeys; users which have full read-access will then see URLs with `?k=...` appended to the end, and `g` users must provide that URL including the correct key to avoid a 404

|

||||

|

||||

|

||||

## gotchas

|

||||

|

||||

behavior that might be unexpected

|

||||

|

||||

* users without read-access to a folder can still see the `.prologue.html` / `.epilogue.html` / `README.md` contents, for the purpose of showing a description on how to use the uploader for example

|

||||

|

||||

|

||||

# recovering from crashes

|

||||

|

||||

## client crashes

|

||||

|

||||

### frefox wsod

|

||||

|

||||

firefox 87 can crash during uploads -- the entire browser goes, including all other browser tabs, everything turns white

|

||||

|

||||

however you can hit `F12` in the up2k tab and use the devtools to see how far you got in the uploads:

|

||||

|

||||

* get a complete list of all uploads, organized by status (ok / no-good / busy / queued):

|

||||

`var tabs = { ok:[], ng:[], bz:[], q:[] }; for (var a of up2k.ui.tab) tabs[a.in].push(a); tabs`

|

||||

|

||||

* list of filenames which failed:

|

||||

`var ng = []; for (var a of up2k.ui.tab) if (a.in != 'ok') ng.push(a.hn.split('<a href=\"').slice(-1)[0].split('\">')[0]); ng`

|

||||

|

||||

* send the list of filenames to copyparty for safekeeping:

|

||||

`await fetch('/inc', {method:'PUT', body:JSON.stringify(ng,null,1)})`

|

||||

|

||||

|

||||

# dependencies

|

||||

|

||||

mandatory deps:

|

||||

@@ -744,17 +918,11 @@ enable music tags:

|

||||

* either `mutagen` (fast, pure-python, skips a few tags, makes copyparty GPL? idk)

|

||||

* or `ffprobe` (20x slower, more accurate, possibly dangerous depending on your distro and users)

|

||||

|

||||

enable thumbnails of images:

|

||||

* `Pillow` (requires py2.7 or py3.5+)

|

||||

|

||||

enable thumbnails of videos:

|

||||

* `ffmpeg` and `ffprobe` somewhere in `$PATH`

|

||||

|

||||

enable thumbnails of HEIF pictures:

|

||||

* `pyheif-pillow-opener` (requires Linux or a C compiler)

|

||||

|

||||

enable thumbnails of AVIF pictures:

|

||||

* `pillow-avif-plugin`

|

||||

enable [thumbnails](#thumbnails) of...

|

||||

* **images:** `Pillow` (requires py2.7 or py3.5+)

|

||||

* **videos:** `ffmpeg` and `ffprobe` somewhere in `$PATH`

|

||||

* **HEIF pictures:** `pyheif-pillow-opener` (requires Linux or a C compiler)

|

||||

* **AVIF pictures:** `pillow-avif-plugin`

|

||||

|

||||

|

||||

## install recommended deps

|

||||

@@ -791,8 +959,10 @@ if you don't need all the features, you can repack the sfx and save a bunch of s

|

||||

* `223k` after `./scripts/make-sfx.sh re no-ogv no-cm`

|

||||

|

||||

the features you can opt to drop are

|

||||

* `ogv`.js, the opus/vorbis decoder which is needed by apple devices to play foss audio files

|

||||

* `cm`/easymde, the "fancy" markdown editor

|

||||

* `ogv`.js, the opus/vorbis decoder which is needed by apple devices to play foss audio files, saves ~192k

|

||||

* `cm`/easymde, the "fancy" markdown editor, saves ~92k

|

||||

* `fnt`, source-code-pro, the monospace font, saves ~9k

|

||||

* `dd`, the custom mouse cursor for the media player tray tab, saves ~2k

|

||||

|

||||

for the `re`pack to work, first run one of the sfx'es once to unpack it

|

||||

|

||||

@@ -828,7 +998,7 @@ pip install black bandit pylint flake8 # vscode tooling

|

||||

|

||||

## just the sfx

|

||||

|

||||

grab the web-dependencies from a previous sfx (unless you need to modify something in those):

|

||||

first grab the web-dependencies from a previous sfx (assuming you don't need to modify something in those):

|

||||

|

||||

```sh

|

||||

rm -rf copyparty/web/deps

|

||||

@@ -854,8 +1024,8 @@ in the `scripts` folder:

|

||||

|

||||

* run `make -C deps-docker` to build all dependencies

|

||||

* `git tag v1.2.3 && git push origin --tags`

|

||||

* create github release with `make-tgz-release.sh`

|

||||

* upload to pypi with `make-pypi-release.(sh|bat)`

|

||||

* create github release with `make-tgz-release.sh`

|

||||

* create sfx with `make-sfx.sh`

|

||||

|

||||

|

||||

@@ -863,8 +1033,7 @@ in the `scripts` folder:

|

||||

|

||||

roughly sorted by priority

|

||||

|

||||

* hls framework for Someone Else to drop code into :^)

|

||||

* readme.md as epilogue

|

||||

* nothing! currently

|

||||

|

||||

|

||||

## discarded ideas

|

||||

@@ -892,3 +1061,5 @@ roughly sorted by priority

|

||||

* indexedDB for hashes, cfg enable/clear/sz, 2gb avail, ~9k for 1g, ~4k for 100m, 500k items before autoeviction

|

||||

* blank hashlist when up-ok to skip handshake

|

||||

* too many confusing side-effects

|

||||

* hls framework for Someone Else to drop code into :^)

|

||||

* probably not, too much stuff to consider -- seeking, start at offset, task stitching (probably np-hard), conditional passthru, rate-control (especially multi-consumer), session keepalive, cache mgmt...

|

||||

|

||||

@@ -1,3 +1,11 @@

|

||||

# [`up2k.py`](up2k.py)

|

||||

* command-line up2k client [(webm)](https://ocv.me/stuff/u2cli.webm)

|

||||

* file uploads, file-search, autoresume of aborted/broken uploads

|

||||

* faster than browsers

|

||||

* early beta, if something breaks just restart it


# [`copyparty-fuse.py`](copyparty-fuse.py)

|

||||

* mount a copyparty server as a local filesystem (read-only)

|

||||

* **supports Windows!** -- expect `194 MiB/s` sequential read

|

||||

@@ -47,6 +55,7 @@ you could replace winfsp with [dokan](https://github.com/dokan-dev/dokany/releas

|

||||

* copyparty can Popen programs like these during file indexing to collect additional metadata

|

||||

|

||||

|

||||

|

||||

# [`dbtool.py`](dbtool.py)

|

||||

upgrade utility which can show db info and help transfer data between databases, for example when a new version of copyparty is incompatible with the old DB and automatically rebuilds the DB from scratch, but you have some really expensive `-mtp` parsers and want to copy over the tags from the old db

|

||||

|

||||

@@ -63,6 +72,7 @@ cd /mnt/nas/music/.hist

|

||||

```

|

||||

|

||||

|

||||

|

||||

# [`prisonparty.sh`](prisonparty.sh)

|

||||

* run copyparty in a chroot, preventing any accidental file access

|

||||

* creates bindmounts for /bin, /lib, and so on, see `sysdirs=`

|

||||

|

||||

@@ -22,7 +22,7 @@ dependencies:

|

||||

|

||||

note:

|

||||

you probably want to run this on windows clients:

|

||||

https://github.com/9001/copyparty/blob/master/contrib/explorer-nothumbs-nofoldertypes.reg

|

||||

https://github.com/9001/copyparty/blob/hovudstraum/contrib/explorer-nothumbs-nofoldertypes.reg

|

||||

|

||||

get server cert:

|

||||

awk '/-BEGIN CERTIFICATE-/ {a=1} a; /-END CERTIFICATE-/{exit}' <(openssl s_client -connect 127.0.0.1:3923 </dev/null 2>/dev/null) >cert.pem

|

||||

@@ -71,7 +71,7 @@ except:

|

||||

elif MACOS:

|

||||

libfuse = "install https://osxfuse.github.io/"

|

||||

else:

|

||||

libfuse = "apt install libfuse\n modprobe fuse"

|

||||

libfuse = "apt install libfuse3-3\n modprobe fuse"

|

||||

|

||||

print(

|

||||

"\n could not import fuse; these may help:"

|

||||

@@ -393,15 +393,16 @@ class Gateway(object):

|

||||

|

||||

rsp = json.loads(rsp.decode("utf-8"))

|

||||

ret = []

|

||||

for is_dir, nodes in [[True, rsp["dirs"]], [False, rsp["files"]]]:

|

||||

for statfun, nodes in [

|

||||

[self.stat_dir, rsp["dirs"]],

|

||||

[self.stat_file, rsp["files"]],

|

||||

]:

|

||||

for n in nodes:

|

||||

fname = unquote(n["href"]).rstrip(b"/")

|

||||

fname = fname.decode("wtf-8")

|

||||

fname = unquote(n["href"].split("?")[0]).rstrip(b"/").decode("wtf-8")

|

||||

if bad_good:

|

||||

fname = enwin(fname)

|

||||

|

||||

fun = self.stat_dir if is_dir else self.stat_file

|

||||

ret.append([fname, fun(n["ts"], n["sz"]), 0])

|

||||

ret.append([fname, statfun(n["ts"], n["sz"]), 0])

|

||||

|

||||

return ret

|

||||

|

||||

|

||||

@@ -1,11 +1,19 @@

|

||||

standalone programs which take an audio file as argument

|

||||

|

||||

**NOTE:** these all require `-e2ts` to be functional, meaning you need to do at least one of these: `apt install ffmpeg` or `pip3 install mutagen`

|

||||

|

||||

some of these rely on libraries which are not MIT-compatible

|

||||

|

||||

* [audio-bpm.py](./audio-bpm.py) detects the BPM of music using the BeatRoot Vamp Plugin; imports GPL2

|

||||

* [audio-key.py](./audio-key.py) detects the melodic key of music using the Mixxx fork of keyfinder; imports GPL3

|

||||

* [media-hash.py](./media-hash.py) generates checksums for audio and video streams; uses FFmpeg (LGPL or GPL)

|

||||

|

||||

these do not have any problematic dependencies:

|

||||

|

||||

* [cksum.py](./cksum.py) computes various checksums

|

||||

* [exe.py](./exe.py) grabs metadata from .exe and .dll files (example for retrieving multiple tags with one parser)

|

||||

* [wget.py](./wget.py) lets you download files by POSTing URLs to copyparty

|

||||

|

||||

|

||||

# dependencies

|

||||

|

||||

|

||||

@@ -25,6 +25,7 @@ def det(tf):

|

||||

"-v", "fatal",

|

||||

"-ss", "13",

|

||||

"-y", "-i", fsenc(sys.argv[1]),

|

||||

"-map", "0:a:0",

|

||||

"-ac", "1",

|

||||

"-ar", "22050",

|

||||

"-t", "300",

|

||||

|

||||

@@ -28,6 +28,7 @@ def det(tf):

|

||||

"-hide_banner",

|

||||

"-v", "fatal",

|

||||

"-y", "-i", fsenc(sys.argv[1]),

|

||||

"-map", "0:a:0",

|

||||

"-t", "300",

|

||||

"-sample_fmt", "s16",

|

||||

tf

|

||||

|

||||

89

bin/mtag/cksum.py

Executable file


@@ -0,0 +1,89 @@

|

||||

#!/usr/bin/env python3

|

||||

|

||||

import sys

|

||||

import json

|

||||

import zlib

|

||||

import struct

|

||||

import base64

|

||||

import hashlib

|

||||

|

||||

try:

|

||||

from copyparty.util import fsenc

|

||||

except:

|

||||

|

||||

def fsenc(p):

|

||||

return p

|

||||

|

||||

|

||||

"""

|

||||

calculates various checksums for uploads,

|

||||

usage: -mtp crc32,md5,sha1,sha256b=bin/mtag/cksum.py

|

||||

"""

|

||||

|

||||

|

||||

def main():

|

||||

config = "crc32 md5 md5b sha1 sha1b sha256 sha256b sha512/240 sha512b/240"

|

||||

# b suffix = base64 encoded

|

||||

# slash = truncate to n bits

|

||||

|

||||

known = {

|

||||

"md5": hashlib.md5,

|

||||

"sha1": hashlib.sha1,

|

||||

"sha256": hashlib.sha256,

|

||||

"sha512": hashlib.sha512,

|

||||

}

|

||||

config = config.split()

|

||||

hashers = {

|

||||

k: v()

|

||||

for k, v in known.items()

|

||||

if k in [x.split("/")[0].rstrip("b") for x in config]

|

||||

}

|

||||

crc32 = 0 if "crc32" in config else None

|

||||

|

||||