mirror of https://github.com/9001/copyparty.git

synced 2025-10-24 08:33:58 +00:00

Compare commits: 610 commits

| SHA1 |
|---|
| f3dfd24c92 |
| fa0a7f50bb |
| 44a78a7e21 |
| 6b75cbf747 |
| e7b18ab9fe |
| aa12830015 |
| f156e00064 |
| d53c212516 |
| ca27f8587c |
| 88ce008e16 |
| 081d2cc5d7 |
| 60ac68d000 |
| fbe656957d |
| 5534c78c17 |
| a45a53fdce |
| 972a56e738 |
| 5e03b3ca38 |
| 1078d933b4 |
| d6bf300d80 |
| a359d64d44 |
| 22396e8c33 |
| 5ded5a4516 |
| 79c7639aaf |
| 5bbf875385 |
| 5e159432af |
| 1d6ae409f6 |
| 9d729d3d1a |
| 4dd5d4e1b7 |
| acd8149479 |
| b97a1088fa |
| b77bed3324 |
| a2b7c85a1f |
| b28533f850 |
| bd8c7e538a |
| 89e48cff24 |
| ae90a7b7b6 |
| 6fc1be04da |
| 0061d29534 |
| a891f34a93 |
| d6a1e62a95 |
| cda36ea8b4 |
| 909a76434a |
| 39348ef659 |
| 99d30edef3 |
| b63ab15bf9 |
| 485cb4495c |
| df018eb1f2 |
| 49aa47a9b8 |
| 7d20eb202a |
| c533da9129 |
| 5cba31a814 |
| 1d824cb26c |
| 83b903d60e |
| 9c8ccabe8e |
| b1f2c4e70d |
| 273ca0c8da |
| d6f516b34f |
| 83127858ca |
| d89329757e |
| 49ffec5320 |
| 2eaae2b66a |
| ea4441e25c |
| e5f34042f9 |
| 271096874a |
| 8efd780a72 |
| 41bcf7308d |
| d102bb3199 |
| d0bed95415 |
| 2528729971 |
| 292c18b3d0 |
| 0be7c5e2d8 |
| eb5aaddba4 |
| d8fd82bcb5 |
| 97be495861 |
| 8b53c159fc |
| 81e281f703 |
| 3948214050 |
| c5e9a643e7 |
| d25881d5c3 |
| 38d8d9733f |
| 118ebf668d |
| a86f09fa46 |
| dd4fb35c8f |
| 621eb4cf95 |
| deea66ad0b |
| bf99445377 |
| 7b54a63396 |
| 0fcb015f9a |
| 0a22b1ffb6 |
| 68cecc52ab |
| 53657ccfff |
| 96223fda01 |
| 374ff3433e |
| 5d63949e98 |
| 6b065d507d |
| e79997498a |
| f7ee02ec35 |
| 69dc433e1c |
| c880cd848c |
| 5752b6db48 |
| b36f905eab |
| 483dd527c6 |
| e55678e28f |
| 3f4a8b9d6f |
| 02a856ecb4 |
| 4dff726310 |
| cbc449036f |
| 8f53152220 |
| bbb1e165d6 |
| fed8d94885 |
| 58040cc0ed |
| 03d692db66 |
| 903f8e8453 |
| 405ae1308e |
| 8a0f583d71 |
| b6d7017491 |
| 0f0217d203 |
| a203e33347 |
| 3b8f697dd4 |
| 78ba16f722 |
| 0fcfe79994 |
| c0e6df4b63 |
| 322abdcb43 |
| 31100787ce |
| c57d721be4 |
| 3b5a03e977 |
| ed807ee43e |
| 073c130ae6 |
| 8810e0be13 |
| f93016ab85 |
| b19cf260c2 |
| db03e1e7eb |
| e0d975e36a |
| cfeb15259f |
| 3b3f8fc8fb |
| 88bd2c084c |
| bd367389b0 |
| 58ba71a76f |
| d03e34d55d |
| 24f239a46c |
| 2c0826f85a |
| c061461d01 |
| e7982a04fe |
| 33b91a7513 |
| 9bb1323e44 |
| e62bb807a5 |
| 3fc0d2cc4a |
| 0c786b0766 |
| 68c7528911 |
| 26e18ae800 |
| c30dc0b546 |
| f94aa46a11 |
| 403261a293 |
| c7d9cbb11f |
| 57e1c53cbb |
| 0754b553dd |
| 50661d941b |
| c5db7c1a0c |
| 2cef5365f7 |
| fbc4e94007 |
| 037ed5a2ad |
| 69dfa55705 |
| a79a5c4e3e |
| 7e80eabfe6 |
| 375b72770d |
| e2dd683def |
| 9eba50c6e4 |
| 5a579dba52 |
| e86c719575 |
| 0e87f35547 |
| b6d3d791a5 |
| c9c3302664 |
| c3e4d65b80 |
| 27a03510c5 |
| ed7727f7cb |
| 127ec10c0d |
| 5a9c0ad225 |
| 7e8daf650e |
| 0cf737b4ce |
| 74635e0113 |
| e5c4f49901 |
| e4654ee7f1 |
| e5d05c05ed |
| 73c4f99687 |
| 28c12ef3bf |
| eed82dbb54 |
| 2c4b4ab928 |
| 505a8fc6f6 |
| e4801d9b06 |
| 04f1b2cf3a |
| c06d928bb5 |
| ab09927e7b |
| 779437db67 |
| 28cbdb652e |
| 2b2415a7d8 |
| 746a8208aa |
| a2a041a98a |
| 10b436e449 |
| 4d62b34786 |
| 0546210687 |
| f8c11faada |
| 16d6e9be1f |
| aff8185f2e |
| 217d15fe81 |
| 171e93c201 |
| acc1d2e9e3 |
| 49c2f37154 |
| 69e54497aa |
| 9aa1885669 |
| 4418508513 |
| e897df3b34 |
| 8cd97ab0e7 |
| bf4949353d |
| 98a944f7cc |
| 7c10f81c92 |
| 126ecc55c3 |
| 1034a51bd2 |
| a2657887cc |
| c14b17bfaf |
| 59ebc795e7 |
| 8e128d917e |
| ea762b05e0 |
| db374b19f1 |
| ab3839ef36 |
| 9886c442f2 |
| c8d1926d52 |
| a6bd699e52 |
| 12143f2702 |
| 480705dee9 |
| 781d5094f4 |
| 5615cb94cd |
| 302302a2ac |
| 9761b4e3e9 |
| 0cf6924dca |
| 5fd81e9f90 |
| 52bf6f892b |
| f3cce232a4 |
| 53d3c8b28e |
| 83fec3cca7 |
| 3cefc99b7d |
| 3a38dcbc05 |
| 7ff08bce57 |
| fd490af434 |
| 1195b8f17e |
| 28dce13776 |
| 431f20177a |
| 87aff54d9d |
| f50462de82 |
| 9bda8c7eb6 |
| e83c63d239 |
| b38533b0cc |
| 5ccca3fbd5 |
| 9e850fc3ab |
| ffbfcd7e00 |
| 5ea7590748 |
| 290c3bc2bb |
| b12131e91c |
| 3b354447b0 |
| d09ec6feaa |
| 21405c3fda |
| 13e5c96cab |
| 426687b75e |
| c8f59fb978 |
| 871dde79a9 |
| e14d81bc6f |
| 514d046d1f |
| 4ed9528d36 |
| 625560e642 |
| 73ebd917d1 |
| cd3e0afad2 |
| d8d1f94a86 |
| 00dfd8cfd1 |
| 273de6db31 |
| c6c0eeb0ff |
| e70c74a3b5 |
| f7d939eeab |
| e815c091b9 |
| 963529b7cf |
| 638a52374d |
| d9d42b7aa2 |
| ec7e5f36a2 |
| 56110883ea |
| 7f8d7d6006 |
| 49e4fb7e12 |
| 8dbbea473f |
| 3d375d5114 |
| f3eae67d97 |
| 40c1b19235 |
| ccaf0ab159 |
| d07f147423 |
| f5cb9f92b9 |
| f991f74983 |
| 6b3295059e |
| b18a07ae6b |
| 8ab03dabda |
| 5e760e35dc |
| afbfa04514 |
| 7aace470c5 |
| b4acb24f6a |
| bcee8a4934 |
| 36b0718542 |
| 9a92bca45d |
| b07445a363 |
| a62ec0c27e |
| 57e3a2d382 |
| b61022b374 |
| a3e2b2ec87 |
| a83d3f8801 |
| 90c5f2b9d2 |
| 4885653c07 |
| 21e1cd87ca |
| 81f82e8e9f |
| c0e31851da |
| 6599c3eced |
| 5d6c61a861 |
| 1a5c66edd3 |
| deae9fe95a |
| abd65c6334 |
| 8137a99904 |
| 6f6f9c1f74 |
| 7b575f716f |
| 6ba6ea3572 |
| 9a22ad5ea3 |
| beaab9778e |
| f327bdb6b4 |
| ae180e0f5f |
| e3f1d19756 |
| 93c2bd6ef6 |
| 4d0e5ff6db |
| 0893f06919 |
| 46b6abde3f |
| 0696610dee |
| edf0d3684c |
| 7af159f5f6 |
| 7f2cb6764a |
| 96495a9bf1 |
| b2fafec5fc |
| 0850b8ae2b |
| 8a68a96c57 |
| d3aae8ed6a |
| c62ebadda8 |
| ffcee6d390 |
| de32838346 |
| b9a4e47ea2 |
| 57d994422d |
| 6ecd745323 |
| bd769f5bdb |
| 2381692aba |
| 24fdada0a0 |
| bb5169710a |
| 9cde2352f3 |
| 482dd7a938 |
| bddcc69438 |
| 19d4540630 |
| 4f5f6c81f5 |
| 7e4c1238ba |
| f7196ac773 |
| 7a7c832000 |
| 2b4ccdbebb |
| 0d16b49489 |
| 768405b691 |
| da01413b7b |
| 914e22c53e |
| 43a23bf733 |
| 92bb00c6d2 |
| b0b97a2648 |
| 2c452fe323 |
| ad73d0c77d |
| 7f9bf1c78c |
| 61a6bc3a65 |
| 46e10b0e9f |
| 8441206e26 |
| 9fdc5ee748 |
| 00ff133387 |
| 96164cb934 |
| 82fb21ae69 |
| 89d4a2b4c4 |
| fc0c7ff374 |
| 5148c4f2e9 |
| c3b59f7bcf |
| 61e148202b |
| 8a4e0739bc |
| f75c5f2fe5 |
| 81d5859588 |
| 721886bb7a |
| b23c272820 |
| cd02bfea7a |
| 6774bd88f9 |
| 1046a4f376 |
| 8081f9ddfd |
| fa656577d1 |
| b14b86990f |
| 2a6dd7b512 |
| feebdee88b |
| 99d9277f5d |
| 9af64d6156 |
| 5e3775c1af |
| 2d2e8a3da7 |
| b2a560b76f |
| 39397a489d |
| ff593a0904 |
| f12789cf44 |
| 4f8cf2fc87 |
| fda98730ac |
| 06c6ddffb6 |
| d29f0c066c |
| c9e4de3346 |
| ca0b97f72d |
| b38f20b408 |
| 05b1dbaf56 |
| b8481e32ba |
| 9c03c65e07 |
| d8ed006b9b |
| 63c0623a5e |
| fd84506db0 |
| d8bcb44e44 |
| 56a26b0916 |
| efcf1d6b90 |
| 9f578bfec6 |
| 1f170d7d28 |
| 5ae14cf9be |
| aaf9d53be9 |
| 75c73f7ba7 |
| b6dba8beee |
| 94521cdc1a |
| 3365b1c355 |
| 6c957c4923 |
| 833997f04c |
| 68d51e4037 |
| ce274d2011 |
| 280778ed43 |
| 0f558ecbbf |
| 58f9e05d93 |
| 1ec981aea7 |
| 2a90286a7c |
| 12d25d09b2 |
| a039fae1a4 |
| 322b9abadc |
| 0aaf954cea |
| c2d22aa3d1 |
| 6934c75bba |
| c58cf78f86 |
| 7f0de790ab |
| d4bb4e3a73 |
| d25612d038 |
| 116b2351b0 |
| 69b83dfdc4 |
| 3b1839c2ce |
| 13742ebdf8 |
| 634657bea1 |
| 46e70d50b7 |
| d64e9b85a7 |
| fb853edbe3 |
| cc076c1be1 |
| 98cc9a6755 |
| 7bd2b9c23a |
| de724a1ff3 |
| 2163055dae |
| 93ed0fc10b |
| 0d98cefd40 |
| d58988a033 |
| 2acfab1e3f |
| b915dfe9a6 |
| 25bd5a823e |
| 1c35de4716 |
| 4c00435a0a |
| 844e3079a8 |
| 4778cb5b2c |
| ec5d60b919 |
| e1f4b960e8 |
| 669e46da54 |
| ba94cc5df7 |
| d08245c3df |
| 5c18d12cbf |
| 580a42dec7 |
| 29286e159b |
| 19bcf90e9f |
| dae9c00742 |
| 35324ceb7c |
| 5aadd47199 |
| 7d9057cc62 |
| c4b322b883 |
| 19b09c898a |
| eafe2098b6 |
| 2bc6a20d71 |
| 8b502a7235 |
| 37567844af |
| 2f6c4e0e34 |
| 1c7cc4cb2b |
| f83db3648e |
| b164aa00d4 |
| a2d866d0c2 |
| 2dfe4ac4c6 |
| db65d05cb5 |
| 300c0194c7 |
| 37a0d2b087 |
| a4959300ea |
| 223657e5f8 |
| 0c53de6767 |
| 9c309b1498 |
| 1aa1b34c80 |
| 755a2ee023 |
| 69d3359e47 |
| a90c49b8fb |
| b1222edb27 |
| b967a92f69 |
| 90a5cb5e59 |
| 7aba9cb76b |
| f550a8171d |
| 82e568d4c9 |
| 7b2a4a3d59 |
| 0265455cd1 |
| afafc886a4 |
| 8a959f6ac4 |
| 1c3aa0d2c5 |
| 79b7d3316a |
| fa7768583a |
| faf49f6c15 |
| 765af31b83 |
| b6a3c52d67 |
| b025c2f660 |
| e559a7c878 |
| 5c8855aafd |
| b5fc537b89 |
| 14899d3a7c |
| 0ea7881652 |
| ec29b59d1e |
| 9405597c15 |
| 82441978c6 |
| e0e6291bdb |
| b2b083fd0a |
| f8a51b68e7 |
| e0a19108e5 |
| 770ea68ca8 |
| ce36c52baf |
| a7da1dd233 |
| 678ef296b4 |
| 9e5627d805 |
| 5958ee4439 |
| 7127e57f0e |
| ee9c6dc8aa |
| 92779b3f48 |
| 2f1baf17d4 |
| 583da3d4a9 |
| bf9ff78bcc |
| 2cb07792cc |
| 47bc8bb466 |
| 94ad1f5732 |
| 09557fbe83 |
| 1c0f44fa4e |
| fc4d59d2d7 |
| 12345fbacc |
| 2e33c8d222 |
| db5f07f164 |
| e050e69a43 |
| 27cb1d4fc7 |
| 5d6a740947 |
| da3f68c363 |
| d7d1c3685c |
| dab3407beb |
| 592987a54a |
| 8dca8326f7 |
| 633481fae3 |
| e7b99e6fb7 |
| 2a6a3aedd0 |
| 866c74c841 |
| dad92bde26 |
| a994e034f7 |
| 2801c04f2e |
| 316e3abfab |
| c15ecb6c8e |
| ee96005026 |
| 5b55d05a20 |
| 2f09c62c4e |
| 1cc8b873d4 |
| 15d5859750 |
| a1ecef8020 |
| e0a38ceeee |
| c4bea13be5 |
| 5dcefab183 |
| 28e3178ac5 |
| 23b021a98b |
| 0cda38f53d |
| 6e43ee7cc7 |
| da1094db84 |
| 717d8dc7d9 |
| 75e68d3427 |
| d9c71c11fd |
| 706f30033e |
| 04047f3a72 |
| 060368e93d |
| bef2e92cef |
| 334c07cc0c |
| ee284dd282 |
| c53126d373 |
| 00f05941d4 |
| 1c49b71606 |
| fc5c815824 |
| 836463bab2 |
| 9e3a560ea6 |
| 8786416428 |
| 53f22c25c9 |
| c2016ba037 |
| 5283837e6d |
| 82f2200f55 |
| 5cf49928b6 |
| eec3efd683 |
| bf0aac2cbd |
| 10652427bc |
| a4b0c810a4 |

`.eslintrc.json` · normal file · 12 changed lines

`@@ -0,0 +1,12 @@`

```json
{
    "env": {
        "browser": true,
        "es2021": true
    },
    "extends": "eslint:recommended",
    "parserOptions": {
        "ecmaVersion": 12
    },
    "rules": {
    }
}
```

`.gitattributes` · vendored · 2 changed lines

`@@ -1,4 +1,6 @@`

```
* text eol=lf

*.reg text eol=crlf

*.png binary
*.gif binary
```

`.gitignore` · vendored · 14 changed lines

`@@ -8,17 +8,15 @@ copyparty.egg-info/`

```
buildenv/
build/
dist/
*.rst
.env/
sfx/
.venv/

# sublime
# ide
*.sublime-workspace

# winmerge
*.bak

# other licenses
contrib/

# deps
copyparty/web/deps
# derived
copyparty/web/deps/
srv/
```

`.vscode/launch.json` · vendored · 30 changed lines

`@@ -9,15 +9,25 @@`

```
            "console": "integratedTerminal",
            "cwd": "${workspaceFolder}",
            "args": [
                "-j",
                "0",
                //"-nw",
                "-a",
                "ed:wark",
                "-v",
                "/home/ed/inc:inc:r:aed"
                "-ed",
                "-emp",
                "-e2dsa",
                "-e2ts",
                "-mtp",
                ".bpm=f,bin/mtag/audio-bpm.py",
                "-aed:wark",
                "-vsrv::r:aed:cnodupe",
                "-vdist:dist:r"
            ]
        },
        {
            "name": "No debug",
            "preLaunchTask": "no_dbg",
            "type": "python",
            //"request": "attach", "port": 42069
            // fork: nc -l 42069 </dev/null
        },
        {
            "name": "Run active unit test",
            "type": "python",
```

`@@ -30,5 +40,13 @@`

```
                "${file}"
            ]
        },
        {
            "name": "Python: Current File",
            "type": "python",
            "request": "launch",
            "program": "${file}",
            "console": "integratedTerminal",
            "justMyCode": false
        },
    ]
}
```

`.vscode/launch.py` · vendored · normal file · 45 changed lines

`@@ -0,0 +1,45 @@`

```python
# takes arguments from launch.json
# is used by no_dbg in tasks.json
# launches 10x faster than mspython debugpy
# and is stoppable with ^C

import re
import os
import sys

print(sys.executable)

import shlex
import jstyleson
import subprocess as sp


with open(".vscode/launch.json", "r", encoding="utf-8") as f:
    tj = f.read()

oj = jstyleson.loads(tj)
argv = oj["configurations"][0]["args"]

try:
    sargv = " ".join([shlex.quote(x) for x in argv])
    print(sys.executable + " -m copyparty " + sargv + "\n")
except:
    pass

argv = [os.path.expanduser(x) if x.startswith("~") else x for x in argv]

if re.search(" -j ?[0-9]", " ".join(argv)):
    argv = [sys.executable, "-m", "copyparty"] + argv
    sp.check_call(argv)
else:
    sys.path.insert(0, os.getcwd())
    from copyparty.__main__ import main as copyparty

    try:
        copyparty(["a"] + argv)
    except SystemExit as ex:
        if ex.code:
            raise

print("\n\033[32mokke\033[0m")
sys.exit(1)
```
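
The `-j` test in `launch.py` decides whether copyparty is spawned as a `python -m copyparty` subprocess or imported and run in-process. Below is a minimal sketch that isolates that check and the shell-quoted echo of the command line, using only `re` and `shlex` like the script does; the helper names `wants_subprocess` and `echo_cmdline` are hypothetical. Running it shows one quirk of the pattern: it requires a space before `-j`, and `" ".join()` adds no leading space, so a `-j` passed as the very first argument is not detected.

```python
import re
import shlex


def wants_subprocess(argv):
    # same test as launch.py: "-j", an optional space, then a digit,
    # searched for in the space-joined argument list
    return re.search(" -j ?[0-9]", " ".join(argv)) is not None


def echo_cmdline(python, argv):
    # shell-quote each argument so the printed line can be pasted into a terminal
    return python + " -m copyparty " + " ".join(shlex.quote(x) for x in argv)


if __name__ == "__main__":
    print(wants_subprocess(["-e2dsa", "-j", "4"]))  # True
    print(wants_subprocess(["-e2dsa", "-j4"]))  # True
    print(wants_subprocess(["-j", "0", "-e2dsa"]))  # False: nothing precedes the first "-j"
    print(echo_cmdline("python3", ["-v", "srv::r", "-a", "ed:two words"]))
    # python3 -m copyparty -v srv::r -a 'ed:two words'
```

Searching `" " + " ".join(argv)` instead would also catch a leading `-j`; whether that matters depends on how the args in `launch.json` are ordered.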

`.vscode/settings.json` · vendored · 14 changed lines

`@@ -37,7 +37,7 @@`

```
    "python.linting.banditEnabled": true,
    "python.linting.flake8Args": [
        "--max-line-length=120",
        "--ignore=E722,F405,E203,W503,W293",
        "--ignore=E722,F405,E203,W503,W293,E402",
    ],
    "python.linting.banditArgs": [
        "--ignore=B104"
```

`@@ -50,11 +50,9 @@`

```
    "files.associations": {
        "*.makefile": "makefile"
    },
    "editor.codeActionsOnSaveTimeout": 9001,
    "editor.formatOnSaveTimeout": 9001,
    //
    // things you may wanna edit:
    //
    "python.pythonPath": ".env/bin/python",
    //"python.linting.enabled": true,
    "python.formatting.blackArgs": [
        "-t",
        "py27"
    ],
    "python.linting.enabled": true,
}
```

`.vscode/tasks.json` · vendored · normal file · 15 changed lines

`@@ -0,0 +1,15 @@`

```json
{
    "version": "2.0.0",
    "tasks": [
        {
            "label": "pre",
            "command": "true;rm -rf inc/* inc/.hist/;mkdir -p inc;",
            "type": "shell"
        },
        {
            "label": "no_dbg",
            "type": "shell",
            "command": "${config:python.pythonPath} .vscode/launch.py"
        }
    ]
}
```

`README.md` · 543 changed lines

`@@ -1,6 +1,6 @@`

# ⇆🎉 copyparty

* http file sharing hub (py2/py3)
* http file sharing hub (py2/py3) [(on PyPI)](https://pypi.org/project/copyparty/)
* MIT-Licensed, 2019-05-26, ed @ irc.rizon.net

`@@ -8,61 +8,527 @@`

turn your phone or raspi into a portable file server with resumable uploads/downloads using IE6 or any other browser

* server runs on anything with `py2.7` or `py3.2+`
* *resumable* uploads need `firefox 12+` / `chrome 6+` / `safari 6+` / `IE 10+`
* server runs on anything with `py2.7` or `py3.3+`
* browse/upload with IE4 / netscape4.0 on win3.11 (heh)
* *resumable* uploads need `firefox 34+` / `chrome 41+` / `safari 7+` for full speed
* code standard: `black`

📷 **screenshots:** [browser](#the-browser) // [upload](#uploading) // [thumbnails](#thumbnails) // [md-viewer](#markdown-viewer) // [search](#searching) // [fsearch](#file-search) // [zip-DL](#zip-downloads) // [ie4](#browser-support)

## readme toc

* top
  * [quickstart](#quickstart)
  * [notes](#notes)
  * [status](#status)
* [bugs](#bugs)
  * [general bugs](#general-bugs)
  * [not my bugs](#not-my-bugs)
* [the browser](#the-browser)
  * [tabs](#tabs)
  * [hotkeys](#hotkeys)
  * [tree-mode](#tree-mode)
  * [thumbnails](#thumbnails)
  * [zip downloads](#zip-downloads)
  * [uploading](#uploading)
    * [file-search](#file-search)
  * [markdown viewer](#markdown-viewer)
  * [other tricks](#other-tricks)
* [searching](#searching)
  * [search configuration](#search-configuration)
  * [database location](#database-location)
  * [metadata from audio files](#metadata-from-audio-files)
  * [file parser plugins](#file-parser-plugins)
  * [complete examples](#complete-examples)
* [browser support](#browser-support)
* [client examples](#client-examples)
* [up2k](#up2k)
* [dependencies](#dependencies)
  * [optional dependencies](#optional-dependencies)
    * [install recommended deps](#install-recommended-deps)
  * [optional gpl stuff](#optional-gpl-stuff)
* [sfx](#sfx)
  * [sfx repack](#sfx-repack)
* [install on android](#install-on-android)
* [dev env setup](#dev-env-setup)
* [how to release](#how-to-release)
* [todo](#todo)

## quickstart

download [copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.py) and you're all set!

running the sfx without arguments (for example by double-clicking it on Windows) will give everyone full access to the current folder; see `-h` for help if you want accounts, volumes, etc.

you may also want these, especially on servers:
* [contrib/systemd/copyparty.service](contrib/systemd/copyparty.service) to run copyparty as a systemd service
* [contrib/nginx/copyparty.conf](contrib/nginx/copyparty.conf) to reverse-proxy behind nginx (for better https)

## notes

general:
* paper-printing is affected by dark/light-mode! use lightmode for color, darkmode for grayscale
  * because no browsers currently implement the media-query to do this properly orz

browser-specific:
* iPhone/iPad: use Firefox to download files
* Android-Chrome: set max "parallel uploads" for 200% upload speed (android bug)
* Android-Firefox: takes a while to select files (in order to avoid the above android-chrome issue)
* Desktop-Firefox: may use gigabytes of RAM if your connection is great and your files are massive
* Android-Chrome: increase "parallel uploads" for higher speed (android bug)
* Android-Firefox: takes a while to select files (their fix for ☝️)
* Desktop-Firefox: ~~may use gigabytes of RAM if your files are massive~~ *seems to be OK now*
* Desktop-Firefox: may stop you from deleting folders you've uploaded until you visit `about:memory` and click `Minimize memory usage`

## status

* [x] sanic multipart parser
* [x] load balancer (multiprocessing)
* [x] upload (plain multipart, ie6 support)
* [x] upload (js, resumable, multithreaded)
* [x] download
* [x] browser
* [x] media player
* [ ] thumbnails
* [ ] download as zip
* [x] volumes
* [x] accounts

summary: all planned features work! now please enjoy the bloatening

summary: close to beta

* backend stuff
  * ☑ sanic multipart parser
  * ☑ load balancer (multiprocessing)
  * ☑ volumes (mountpoints)
  * ☑ accounts
* upload
  * ☑ basic: plain multipart, ie6 support
  * ☑ up2k: js, resumable, multithreaded
  * ☑ stash: simple PUT filedropper
  * ☑ symlink/discard existing files (content-matching)
* download
  * ☑ single files in browser
  * ☑ folders as zip / tar files
  * ☑ FUSE client (read-only)
* browser
  * ☑ tree-view
  * ☑ media player
  * ☑ thumbnails
    * ☑ images using Pillow
    * ☑ videos using FFmpeg
    * ☑ cache eviction (max-age; maybe max-size eventually)
  * ☑ image gallery
  * ☑ SPA (browse while uploading)
    * if you use the file-tree on the left only, not folders in the file list
* server indexing
  * ☑ locate files by contents
  * ☑ search by name/path/date/size
  * ☑ search by ID3-tags etc.
* markdown
  * ☑ viewer
  * ☑ editor (sure why not)

# bugs

* Windows: python 3.7 and older cannot read tags with ffprobe, so use mutagen or upgrade
* Windows: python 2.7 cannot index non-ascii filenames with `-e2d`
* Windows: python 2.7 cannot handle filenames with mojibake
* MacOS: `--th-ff-jpg` may fix thumbnails using macports-FFmpeg

## general bugs

* all volumes must exist / be available on startup; up2k (mtp especially) gets funky otherwise
* cannot mount something at `/d1/d2/d3` unless `d2` exists inside `d1`
* probably more, pls let me know

## not my bugs

* Windows: msys2-python 3.8.6 occasionally throws "RuntimeError: release unlocked lock" when leaving a scoped mutex in up2k
  * this is an msys2 bug, the regular windows edition of python is fine

|

||||

|

||||

|

||||

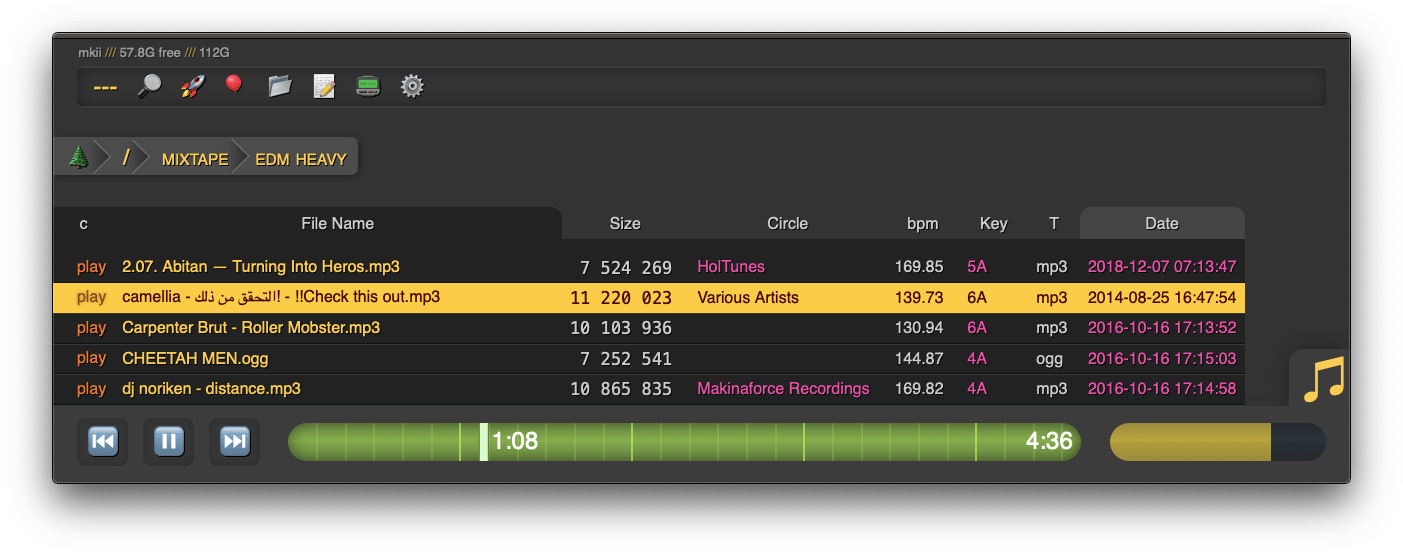

# the browser

|

||||

|

||||

|

||||

|

||||

|

||||

## tabs

|

||||

|

||||

* `[🔎]` search by size, date, path/name, mp3-tags ... see [searching](#searching)

|

||||

* `[🚀]` and `[🎈]` are the uploaders, see [uploading](#uploading)

|

||||

* `[📂]` mkdir, create directories

|

||||

* `[📝]` new-md, create a new markdown document

|

||||

* `[📟]` send-msg, either to server-log or into textfiles if `--urlform save`

|

||||

* `[⚙️]` client configuration options

## hotkeys

the browser has the following hotkeys
* `I/K` prev/next folder
* `P` parent folder
* `G` toggle list / grid view
* `T` toggle thumbnails / icons
* when playing audio:
  * `0..9` jump to 10%..90%
  * `U/O` skip 10sec back/forward
  * `J/L` prev/next song
    * `J` also starts playing the folder
* in the grid view:
  * `S` toggle multiselect
  * `A/D` zoom

## tree-mode

by default there's a breadcrumbs path; you can replace this with a tree-browser sidebar thing by clicking the 🌲

click `[-]` and `[+]` to adjust the size; `[a]` toggles whether the tree widens dynamically as you go deeper or stays fixed-size

|

||||

|

||||

|

||||

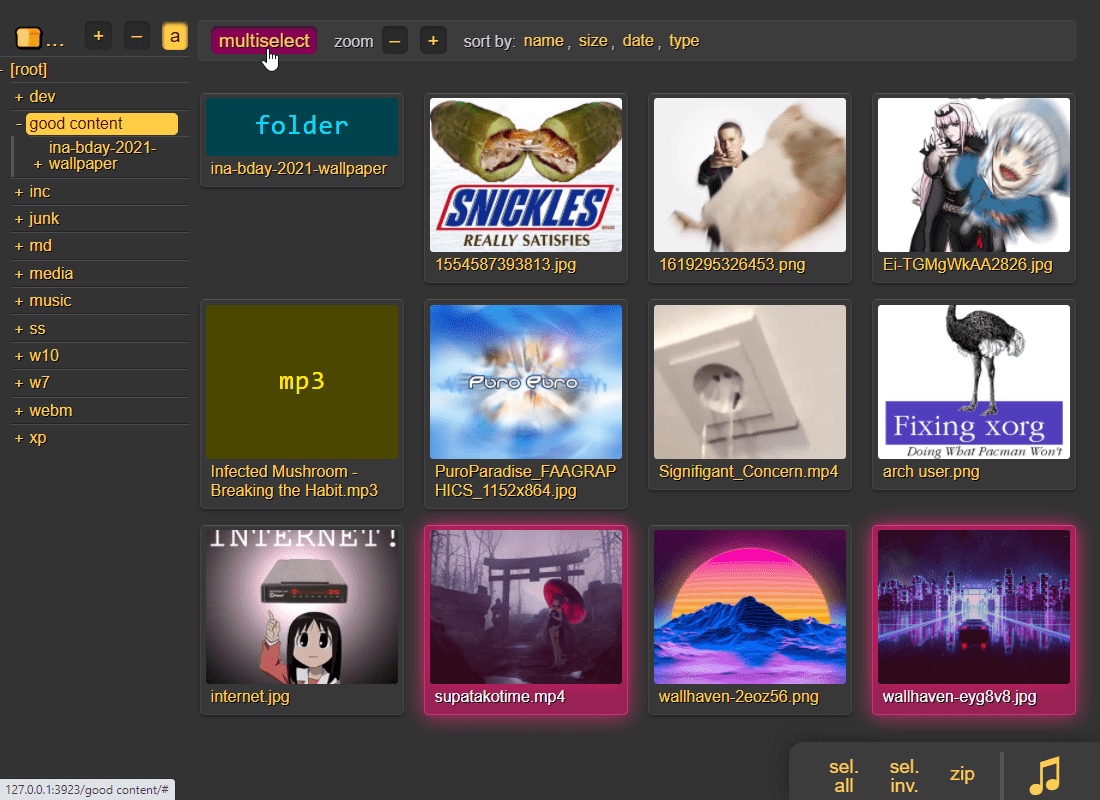

## thumbnails

|

||||

|

||||

|

||||

|

||||

it does static images with Pillow and uses FFmpeg for video files, so you may want to `--no-thumb` or maybe just `--no-vthumb` depending on how destructive your users are

|

||||

|

||||

images named `folder.jpg` and `folder.png` become the thumbnail of the folder they're in

## zip downloads

the `zip` link next to folders can produce various types of zip/tar files using these alternatives in the browser settings tab:

| name | url-suffix | description |
|--|--|--|
| `tar` | `?tar` | plain gnutar, works great with `curl \| tar -xv` |
| `zip` | `?zip=utf8` | works everywhere, glitchy filenames on win7 and older |
| `zip_dos` | `?zip` | traditional cp437 (no unicode) to fix glitchy filenames |
| `zip_crc` | `?zip=crc` | cp437 with crc32 computed early for truly ancient software |
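
The url-suffix column maps each format to a query string appended to a folder's URL. A small sketch of building those URLs and unpacking a `?tar` download without a temporary file; it assumes the server is reachable at `http://127.0.0.1:3923` (copyparty's usual default port) and that folder URLs end in a slash, and the helper names `archive_url` and `fetch_tar` are hypothetical.

```python
import tarfile
import urllib.request
from urllib.parse import quote

# format name -> url-suffix, copied from the table above
URL_SUFFIX = {
    "tar": "?tar",
    "zip": "?zip=utf8",
    "zip_dos": "?zip",
    "zip_crc": "?zip=crc",
}


def archive_url(base, folder, fmt="tar"):
    # percent-encode the folder path (slashes are kept) and append the suffix
    path = quote(folder.strip("/"))
    return base.rstrip("/") + "/" + (path + "/" if path else "") + URL_SUFFIX[fmt]


def fetch_tar(url, dest):
    # stream mode "r|" reads the tar sequentially, straight off the HTTP response
    with urllib.request.urlopen(url) as resp:
        with tarfile.open(fileobj=resp, mode="r|") as tf:
            # newer Pythons offer the "data" filter, which refuses absolute paths and ../ escapes
            kw = {"filter": "data"} if hasattr(tarfile, "data_filter") else {}
            tf.extractall(dest, **kw)
```

For example, `fetch_tar(archive_url("http://127.0.0.1:3923", "music/albums"), "out")` would do roughly what `curl ... | tar -xv` does, assuming that folder exists and is readable.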

* hidden files (dotfiles) are excluded unless `-ed`
* the up2k.db is always excluded
* `zip_crc` will take longer to download since the server has to read each file twice
  * please let me know if you find a program old enough to actually need this
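
Why `zip_crc` needs a second read: a zip entry's local header stores the file's CRC-32 *before* its data, so a writer that streams the data in one pass has to leave that field empty and append the value in a trailing data descriptor, which presumably is what trips up the ancient software mentioned above. Computing the checksum in a separate pass first avoids that. The sketch shows only that pre-pass, using `zlib.crc32`; `crc32_of_file` is a hypothetical helper, not copyparty's code.

```python
import zlib


def crc32_of_file(path, bufsize=64 * 1024):
    # first pass over the file: fold each block into a running CRC-32
    crc = 0
    with open(path, "rb") as f:
        for block in iter(lambda: f.read(bufsize), b""):
            crc = zlib.crc32(block, crc)
    # mask to an unsigned 32-bit value (Python 2 could return negative numbers)
    return crc & 0xFFFFFFFF
```

The second read is the one that actually copies the file into the archive, which is where the extra download time comes from.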

you can also zip a selection of files or folders by clicking them in the browser; that brings up a selection editor and a zip button in the bottom right

|

||||

|

||||

|

||||

|

||||

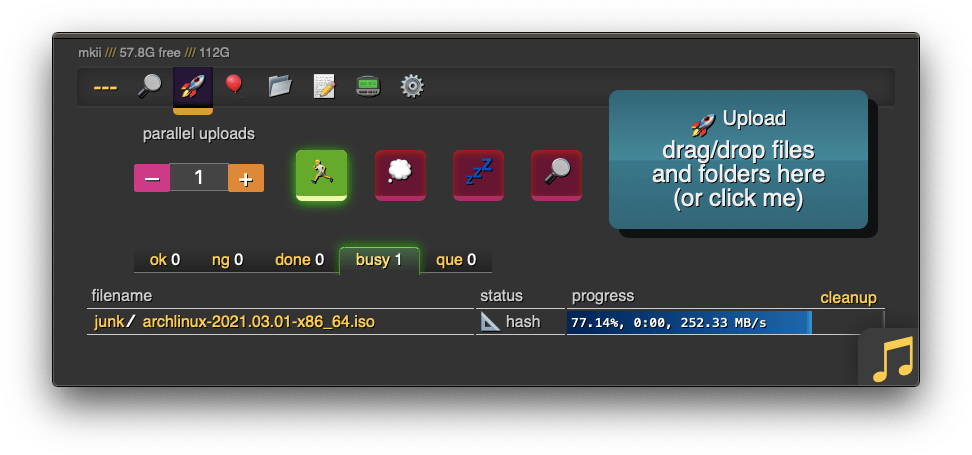

## uploading

|

||||

|

||||

two upload methods are available in the html client:

|

||||

* `🎈 bup`, the basic uploader, supports almost every browser since netscape 4.0

|

||||

* `🚀 up2k`, the fancy one

|

||||

|

||||

up2k has several advantages:

|

||||

* you can drop folders into the browser (files are added recursively)

|

||||

* files are processed in chunks, and each chunk is checksummed

|

||||

* uploads resume if they are interrupted (for example by a reboot)

|

||||

* server detects any corruption; the client reuploads affected chunks

|

||||

* the client doesn't upload anything that already exists on the server

|

||||

* the last-modified timestamp of the file is preserved
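
The chunk-and-checksum idea from the list above, as a minimal sketch: the client hashes fixed-size chunks, the server hashes what it actually received, and only chunks that are missing or whose hashes differ get sent (again). The fixed 1 MiB chunk size, SHA-512 per chunk, and the helper names are assumptions for illustration; see [up2k](#up2k) for how copyparty really sizes and hashes chunks.

```python
import hashlib

CHUNK_SIZE = 1024 * 1024  # assumed fixed chunk size, for illustration only


def chunk_hashes(data, chunk_size=CHUNK_SIZE):
    # one SHA-512 hex digest per chunk; the last chunk may be shorter
    return [
        hashlib.sha512(data[i : i + chunk_size]).hexdigest()
        for i in range(0, len(data), chunk_size)
    ]


def chunks_to_send(client_hashes, server_hashes):
    # a chunk needs uploading if the server never got it, or got it corrupted
    return [
        i
        for i, h in enumerate(client_hashes)
        if i >= len(server_hashes) or server_hashes[i] != h
    ]


if __name__ == "__main__":
    original = bytes(range(256)) * 10  # 2560 bytes -> 3 chunks of up to 1024
    received = bytearray(original[:2048])  # the last chunk never arrived
    received[1500] ^= 1  # and a bitflip landed in chunk 1
    client = chunk_hashes(original, 1024)
    server = chunk_hashes(bytes(received), 1024)
    print(chunks_to_send(client, server))  # [1, 2]
```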

|

||||

|

||||

see [up2k](#up2k) for details on how it works

|

||||

|

||||

|

||||

|

||||

**protip:** you can avoid scaring away users with [docs/minimal-up2k.html](docs/minimal-up2k.html) which makes it look [much simpler](https://user-images.githubusercontent.com/241032/118311195-dd6ca380-b4ef-11eb-86f3-75a3ff2e1332.png)

|

||||

|

||||

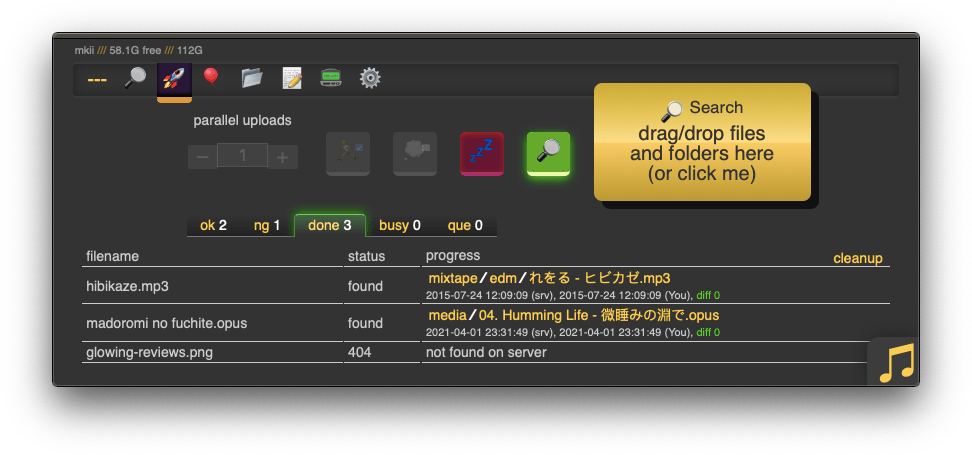

the up2k UI is the epitome of polished inutitive experiences:

|

||||

* "parallel uploads" specifies how many chunks to upload at the same time

|

||||

* `[🏃]` analysis of other files should continue while one is uploading

|

||||

* `[💭]` ask for confirmation before files are added to the list

|

||||

* `[💤]` sync uploading between other copyparty tabs so only one is active

|

||||

* `[🔎]` switch between upload and file-search mode
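
"parallel uploads" works as a concurrency limit: at most that many chunk uploads are in flight at once. A minimal sketch of that idea with a thread pool; `send_chunk` stands in for whatever actually performs an upload, and it, `upload_all` and `FakeUploader` are all hypothetical names, not part of copyparty.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def upload_all(chunks, send_chunk, parallel=2):
    # the pool never runs more than `parallel` send_chunk calls at the same time;
    # map() hands back the results in the original chunk order
    with ThreadPoolExecutor(max_workers=parallel) as pool:
        return list(pool.map(send_chunk, chunks))


class FakeUploader:
    # stand-in uploader that records the highest number of simultaneous calls
    def __init__(self):
        self.lock = threading.Lock()
        self.active = 0
        self.peak = 0

    def __call__(self, chunk):
        with self.lock:
            self.active += 1
            self.peak = max(self.peak, self.active)
        time.sleep(0.01)  # pretend the network is slow
        with self.lock:
            self.active -= 1
        return len(chunk)
```

Raising `parallel` helps when a single connection cannot saturate the link, which matches the Android-Chrome advice in the notes.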

and then there are the tabs below it:
* `[ok]` lists uploads which completed successfully
* `[ng]` lists uploads which failed / got rejected (already exists, ...)
* `[done]` shows a combined list of `[ok]` and `[ng]`, in chronological order
* `[busy]` lists files which are currently hashing, pending-upload, or uploading
  * plus up to 3 entries each from `[done]` and `[que]` for context
* `[que]` lists all the files that are still queued

### file-search

in the `[🚀 up2k]` tab, after toggling the `[🔎]` switch green, any files/folders you drop onto the dropzone will be hashed on the client-side. Each hash is sent to the server, which checks if that file exists somewhere already

files go into `[ok]` if they exist (and you get a link to where it is), otherwise they land in `[ng]`
* the main reason filesearch is combined with the uploader is that the code was too spaghetti to separate it out somewhere else; this is no longer the case, but now i've warmed up to the idea too much
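
The file-search flow boils down to a lookup of content hashes: hash each dropped file locally, ask the server whether that hash is known, and sort the result into `[ok]` (with a location) or `[ng]`. A minimal in-memory sketch; a plain SHA-512 of the whole file and the dict standing in for the server's index are assumptions, not copyparty's actual hashing scheme or database.

```python
import hashlib


def content_hash(data):
    # assumed: a plain SHA-512 over the whole file
    return hashlib.sha512(data).hexdigest()


def file_search(server_index, local_files):
    # server_index: {hash: path on the server}; local_files: {name: bytes}
    ok, ng = {}, []
    for name, data in local_files.items():
        where = server_index.get(content_hash(data))
        if where is not None:
            ok[name] = where  # found: remember where it lives on the server
        else:
            ng.append(name)  # no file with these contents on the server
    return ok, ng
```

Only the hashes need to reach the server, which is why a search is much cheaper than an upload.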

adding the same file multiple times is blocked, so if you first search for a file and then decide to upload it, you have to click the `[cleanup]` button to discard `[done]` files

note that since up2k has to read the file twice, `[🎈 bup]` can be up to 2x faster in extreme cases (if your internet connection is faster than the read-speed of your HDD)

|

||||

|

||||

up2k has saved a few uploads from becoming corrupted in-transfer already; caught an android phone on wifi redhanded in wireshark with a bitflip, however bup with https would *probably* have noticed as well thanks to tls also functioning as an integrity check

|

||||

|

||||

|

||||

## markdown viewer

|

||||

|

||||

|

||||

|

||||

* the document preview has a max-width which is the same as an A4 paper when printed

|

||||

|

||||

|

||||

## other tricks

|

||||

|

||||

* you can link a particular timestamp in an audio file by adding it to the URL, such as `&20` / `&20s` / `&1m20` / `&t=1:20` after the `.../#af-c8960dab`
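
A minimal Python sketch of how those suffixes could map to a playback offset in seconds. Since they sit in the URL fragment (after the `#`), the browser never sends them to the server, so copyparty's real parsing happens client-side; the name `parse_timestamp` and its exact rules are assumptions that only cover the four forms listed above.

```python
import re


def parse_timestamp(suffix: str) -> int:
    """Turn a suffix such as "&20", "20s", "1m20" or "t=1:20" into seconds.

    Hypothetical helper; only the forms listed above are handled.
    """
    s = suffix.lstrip("&")
    if s.startswith("t="):
        s = s[2:]

    # colon form, most significant field first: "1:20" -> 80
    if ":" in s:
        total = 0
        for part in s.split(":"):
            total = total * 60 + int(part)
        return total

    # "20", "20s", "1m20", "1m20s"
    m = re.fullmatch(r"(?:(\d+)m)?(\d+)?s?", s)
    if m is None or not any(m.groups()):
        raise ValueError("unrecognized timestamp: %r" % (suffix,))
    minutes, seconds = (int(g) if g else 0 for g in m.groups())
    return minutes * 60 + seconds
```

so `parse_timestamp("&1m20")` and `parse_timestamp("&t=1:20")` both point at the same 80-second mark.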

|

||||

|

||||

|

||||

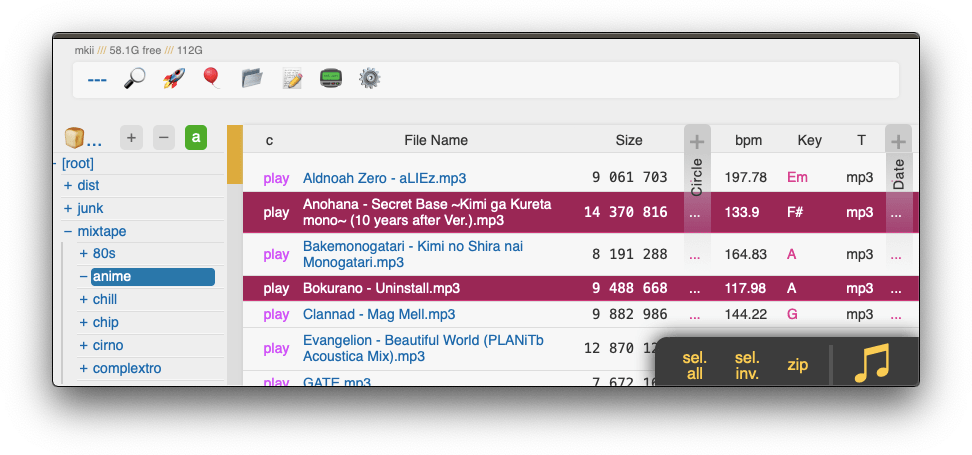

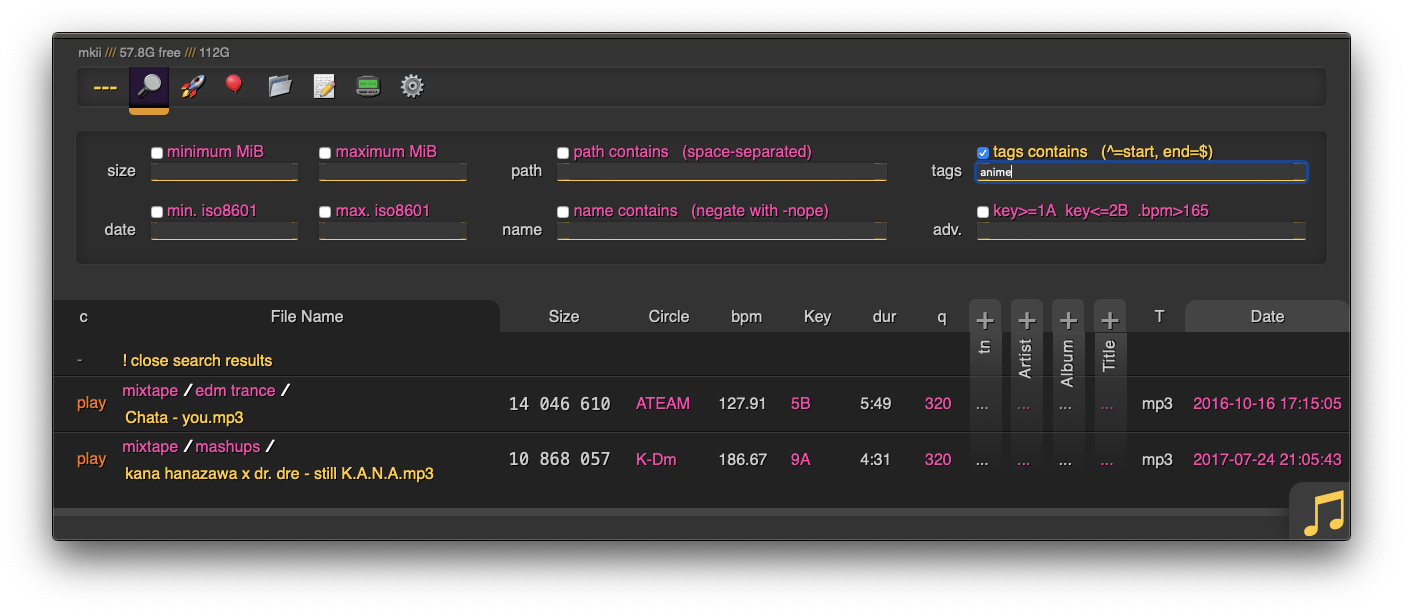

# searching

|

||||

|

||||

|

||||

|

||||

when started with `-e2dsa` copyparty will scan/index all your files. This avoids duplicates on upload, and also makes the volumes searchable through the web-ui:

|

||||

* make search queries by `size`/`date`/`directory-path`/`filename`, or...

|

||||

* drag/drop a local file to see if the same contents exist somewhere on the server, see [file-search](#file-search)

|

||||

|

||||

path/name queries are space-separated, AND'ed together, and words are negated with a `-` prefix, so for example:

|

||||

* path: `shibayan -bossa` finds all files where one of the folders contain `shibayan` but filters out any results where `bossa` exists somewhere in the path

|

||||

* name: `demetori styx` gives you [good stuff](https://www.youtube.com/watch?v=zGh0g14ZJ8I&list=PL3A147BD151EE5218&index=9)
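
The query rules above (space-separated words, all required, a `-` prefix excludes) can be sketched in Python like this. It only illustrates the semantics, since copyparty runs searches against its database; the function names and the case-insensitive substring matching are assumptions of the sketch.

```python
from typing import Iterable, List


def matches(query: str, path: str) -> bool:
    """True if `path` contains every plain word of `query` and none of the
    words prefixed with "-" (case-insensitive substring test, assumed)."""
    haystack = path.lower()
    for word in query.lower().split():
        if word.startswith("-") and len(word) > 1:
            if word[1:] in haystack:  # negated word found -> reject
                return False
        elif word not in haystack:  # required word missing -> reject
            return False
    return True


def search(query: str, paths: Iterable[str]) -> List[str]:
    """Filter a list of paths with an AND'ed, optionally negated query."""
    return [p for p in paths if matches(query, p)]
```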

add `-e2ts` to also scan/index tags from music files:

## search configuration

searching relies on two databases, the up2k filetree (`-e2d`) and the metadata tags (`-e2t`). Configuration can be done through arguments, volume flags, or a mix of both.

through arguments:

* `-e2d` enables file indexing on upload
* `-e2ds` scans writable folders on startup
* `-e2dsa` scans all mounted volumes (including readonly ones)
* `-e2t` enables metadata indexing on upload
* `-e2ts` scans for tags in all files that don't have tags yet
* `-e2tsr` deletes all existing tags and does a full reindex

the same arguments can be set as volume flags, in addition to `d2d` and `d2t` for disabling:

* `-v ~/music::r:ce2dsa:ce2tsr` does a full reindex of everything on startup
* `-v ~/music::r:cd2d` disables **all** indexing, even if any `-e2*` are on
* `-v ~/music::r:cd2t` disables all `-e2t*` (tags), does not affect `-e2d*`

note:

* `e2tsr` is probably always overkill, since `e2ds`/`e2dsa` would pick up any file modifications and cause `e2ts` to reindex those
* the rescan button in the admin panel has no effect unless the volume has `-e2ds` or higher

you can choose to only index filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash` or the volume-flag `cdhash`; this has the following consequences:

* initial indexing is way faster, especially when the volume is on a networked disk
* makes it impossible to [file-search](#file-search)
* if someone uploads the same file contents, the upload will not be detected as a dupe, so it will not get symlinked or rejected

if you set `--no-hash`, you can enable hashing for specific volumes using flag `cehash`

## database location

copyparty creates a subfolder named `.hist` inside each volume where it stores the database, thumbnails, and some other stuff

this can instead be kept in a single place using the `--hist` argument, or the `hist=` volume flag, or a mix of both:

* `--hist ~/.cache/copyparty -v ~/music::r:chist=-` sets `~/.cache/copyparty` as the default place to put volume info, but `~/music` gets the regular `.hist` subfolder (`-` restores default behavior)

note:

* markdown edits are always stored in a local `.hist` subdirectory
* on windows the volflag path is cygwin-style, so `/c/temp` means `C:\temp`, but use regular paths for `--hist`
* you can use cygpaths for volumes too; `-v C:\Users::r` and `-v /c/users::r` both work
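
The cygwin-style path convention in those notes can be pictured as a small Python function. `cygpath_to_windows` is a hypothetical name and only the simple `/<drive>/...` form is handled, so treat it as an illustration of the mapping rather than copyparty's own conversion code.

```python
import re


def cygpath_to_windows(path: str) -> str:
    """Translate "/c/temp" into "C:\\temp".

    Anything that is not "/<drive letter>" optionally followed by
    "/..." is returned unchanged (e.g. regular windows paths).
    """
    m = re.fullmatch(r"/([A-Za-z])(/.*)?", path)
    if m is None:
        return path
    drive, rest = m.group(1).upper(), m.group(2) or "/"
    return drive + ":" + rest.replace("/", "\\")
```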

## metadata from audio files

`-mte` decides which tags to index and display in the browser (and also the display order); this can be changed per-volume:

* `-v ~/music::r:cmte=title,artist` indexes and displays *title* followed by *artist*

if you add/remove a tag from `mte` you will need to run with `-e2tsr` once to rebuild the database, otherwise only new files will be affected

`-mtm` can be used to add or redefine a metadata mapping; say you have media files with `foo` and `bar` tags and you want them to display as `qux` in the browser (preferring `foo` if both are present), then do `-mtm qux=foo,bar` and now you can `-mte artist,title,qux`
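
In Python terms, the `-mtm` preference rule (the first listed source tag that is present wins) and the `-mte` display order boil down to something like the sketch below. The helper names are hypothetical and this is not the code in mtag.py, just the behavior described above.

```python
from typing import Dict, List


def parse_mtm(arg: str) -> Dict[str, List[str]]:
    """Turn "qux=foo,bar" into {"qux": ["foo", "bar"]} (hypothetical helper)."""
    name, _, sources = arg.partition("=")
    return {name: sources.split(",")}


def apply_mtm(raw: Dict[str, str], mapping: Dict[str, List[str]]) -> Dict[str, str]:
    """Pick each display tag from the first raw tag that exists,
    so earlier names in the list take priority."""
    out = {}
    for name, candidates in mapping.items():
        for src in candidates:
            if src in raw:
                out[name] = raw[src]
                break
    return out


def visible(tags: Dict[str, str], mte: List[str]) -> List[str]:
    """Tag values in `-mte` order; missing tags become empty strings."""
    return [tags.get(t, "") for t in mte]
```

with `-mtm qux=foo,bar`, a file carrying both `foo` and `bar` shows the `foo` value as `qux`, and a file with only `bar` falls back to that.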

tags that start with a `.` such as `.bpm` and `.dur`(ation) indicate numeric values

see the beautiful mess of a dictionary in [mtag.py](https://github.com/9001/copyparty/blob/master/copyparty/mtag.py) for the default mappings (should cover mp3, opus, flac, m4a, wav, aif)

`--no-mutagen` disables mutagen and uses ffprobe instead, which...

* is about 20x slower than mutagen
* catches a few tags that mutagen doesn't
  * melodic key, video resolution, framerate, pixfmt
* avoids pulling any GPL code into copyparty
* more importantly, runs ffprobe on incoming files, which is bad if your ffmpeg has a cve

## file parser plugins

copyparty can invoke external programs to collect additional metadata for files using `mtp` (as argument or volume flag); there is a default timeout of 30sec

* `-mtp .bpm=~/bin/audio-bpm.py` will execute `~/bin/audio-bpm.py` with the audio file as argument 1 to provide the `.bpm` tag, if that does not exist in the audio metadata
* `-mtp key=f,t5,~/bin/audio-key.py` uses `~/bin/audio-key.py` to get the `key` tag, replacing any existing metadata tag (`f,`), aborting if it takes longer than 5sec (`t5,`)
* `-v ~/music::r:cmtp=.bpm=~/bin/audio-bpm.py:cmtp=key=f,t5,~/bin/audio-key.py` both as a per-volume config (wow this is getting ugly)

*but wait, there's more!* `-mtp` can be used for non-audio files as well using the `a` flag: `ay` only do audio files, `an` only do non-audio files, or `ad` do all files (d as in dontcare)

* `-mtp ext=an,~/bin/file-ext.py` runs `~/bin/file-ext.py` to get the `ext` tag only if the file is not audio (`an`)
* `-mtp arch,built,ver,orig=an,eexe,edll,~/bin/exe.py` runs `~/bin/exe.py` to get properties about windows-binaries only if the file is not audio (`an`) and the file extension is exe or dll
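
To make the flag grammar above concrete, here is a Python sketch that splits an `-mtp` value into tag names, flags and the program path, and decides whether it applies to a given file. `MtpSpec`, `parse_mtp` and `should_run` are hypothetical names; treating a spec without an `a` flag as audio-only is an assumption inferred from the wording above, and copyparty's real parser may accept more flags than these.

```python
import os
from dataclasses import dataclass, field
from typing import List


@dataclass
class MtpSpec:
    tags: List[str]      # tag names the program provides
    program: str         # path to the parser program (last field)
    force: bool = False  # "f": replace tags already present in the file
    timeout: int = 30    # "tN": abort after N seconds; 30 is the documented default
    audio: str = "y"     # "ay"/"an"/"ad"; audio-only as default is an assumption
    exts: List[str] = field(default_factory=list)  # "eEXT": only these extensions


def parse_mtp(arg: str) -> MtpSpec:
    """Parse a value like "key=f,t5,~/bin/audio-key.py"."""
    tagpart, _, rest = arg.partition("=")
    *flags, program = rest.split(",")
    spec = MtpSpec(tags=tagpart.split(","), program=program)
    for flag in flags:
        if flag == "f":
            spec.force = True
        elif flag[:1] == "t" and flag[1:].isdigit():
            spec.timeout = int(flag[1:])
        elif flag in ("ay", "an", "ad"):
            spec.audio = flag[1]
        elif flag[:1] == "e" and len(flag) > 1:
            spec.exts.append(flag[1:].lower())
        else:
            raise ValueError("unknown mtp flag: %r" % (flag,))
    return spec


def should_run(spec: MtpSpec, path: str, is_audio: bool) -> bool:
    """Apply the audio filter and the extension filter to one file."""
    if spec.audio == "y" and not is_audio:
        return False
    if spec.audio == "n" and is_audio:
        return False
    ext = os.path.splitext(path)[1].lstrip(".").lower()
    return not spec.exts or ext in spec.exts
```

the sketch splits on every comma, so it assumes the program path itself contains no commas.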

## complete examples

* read-only music server with bpm and key scanning
  `python copyparty-sfx.py -v /mnt/nas/music:/music:r -e2dsa -e2ts -mtp .bpm=f,audio-bpm.py -mtp key=f,audio-key.py`

# browser support

`ie` = internet-explorer, `ff` = firefox, `c` = chrome, `iOS` = iPhone/iPad, `Andr` = Android

| feature | ie6 | ie9 | ie10 | ie11 | ff 52 | c 49 | iOS | Andr |
| --------------- | --- | --- | ---- | ---- | ----- | ---- | --- | ---- |
| browse files | yep | yep | yep | yep | yep | yep | yep | yep |
| basic uploader | yep | yep | yep | yep | yep | yep | yep | yep |
| make directory | yep | yep | yep | yep | yep | yep | yep | yep |
| send message | yep | yep | yep | yep | yep | yep | yep | yep |
| set sort order | - | yep | yep | yep | yep | yep | yep | yep |
| zip selection | - | yep | yep | yep | yep | yep | yep | yep |
| directory tree | - | - | `*1` | yep | yep | yep | yep | yep |
| up2k | - | - | yep | yep | yep | yep | yep | yep |
| icons work | - | - | yep | yep | yep | yep | yep | yep |
| markdown editor | - | - | yep | yep | yep | yep | yep | yep |
| markdown viewer | - | - | yep | yep | yep | yep | yep | yep |
| play mp3/m4a | - | yep | yep | yep | yep | yep | yep | yep |
| play ogg/opus | - | - | - | - | yep | yep | `*2` | yep |

* internet explorer 6 to 8 behave the same
* firefox 52 and chrome 49 are the last winxp versions
* `*1` only public folders (login session is dropped) and no history / back-button
* `*2` using a wasm decoder which can sometimes get stuck and consumes a bit more power

quick summary of more eccentric web-browsers trying to view a directory index:

| browser | will it blend |
| ------- | ------------- |
| **safari** (14.0.3/macos) | is chrome with janky wasm, so playing opus can deadlock the javascript engine |
| **safari** (14.0.1/iOS) | same as macos, except it recovers from the deadlocks if you poke it a bit |
| **links** (2.21/macports) | can browse, login, upload/mkdir/msg |
| **lynx** (2.8.9/macports) | can browse, login, upload/mkdir/msg |
| **w3m** (0.5.3/macports) | can browse, login, upload at 100kB/s, mkdir/msg |
| **netsurf** (3.10/arch) | is basically ie6 with much better css (javascript has almost no effect) |
| **ie4** and **netscape** 4.0 | can browse (text is yellow on white), upload with `?b=u` |
| **SerenityOS** (22d13d8) | hits a page fault, works with `?b=u`, file input not implemented, url params are multiplying |

# client examples

* javascript: dump some state into a file (two separate examples)
  * `await fetch('https://127.0.0.1:3923/', {method:"PUT", body: JSON.stringify(foo)});`
  * `var xhr = new XMLHttpRequest(); xhr.open('POST', 'https://127.0.0.1:3923/msgs?raw'); xhr.send('foo');`

* curl/wget: upload some files (post=file, chunk=stdin)
  * `post(){ curl -b cppwd=wark http://127.0.0.1:3923/ -F act=bput -F f=@"$1";}`
    `post movie.mkv`
  * `post(){ wget --header='Cookie: cppwd=wark' http://127.0.0.1:3923/?raw --post-file="$1" -O-;}`
    `post movie.mkv`
  * `chunk(){ curl -b cppwd=wark http://127.0.0.1:3923/ -T-;}`
    `chunk <movie.mkv`

* FUSE: mount a copyparty server as a local filesystem
  * cross-platform python client available in [./bin/](bin/)
  * [rclone](https://rclone.org/) as client can give ~5x performance, see [./docs/rclone.md](docs/rclone.md)

* sharex (screenshot utility): see [./contrib/sharex.sxcu](contrib/#sharexsxcu)
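
For scripting uploads from Python instead of curl, a minimal standard-library sketch modeled on the `fetch` PUT and the `cppwd` cookie from the examples above. The address `127.0.0.1:3923` and password `wark` are just the placeholders used there, and `build_put` / `put_file` are hypothetical names.

```python
import urllib.request


def build_put(url: str, data: bytes, password: str) -> urllib.request.Request:
    """PUT `data` to `url`, sending the password as the `cppwd` cookie
    the same way the curl examples above do."""
    req = urllib.request.Request(url, data=data, method="PUT")
    req.add_header("Cookie", "cppwd=" + password)
    return req


def put_file(url: str, path: str, password: str) -> str:
    """Upload one file (read fully into memory) and return the reply body."""
    with open(path, "rb") as f:
        req = build_put(url, f.read(), password)
    with urllib.request.urlopen(req) as resp:
        return resp.read().decode("utf-8", "replace")
```

e.g. `put_file("http://127.0.0.1:3923/", "movie.mkv", "wark")`; since the whole file is read into memory first, this only suits small-to-medium files.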

copyparty returns a truncated sha512sum of your PUT/POST as base64; you can generate the same checksum locally to verify uploads:

    b512(){ printf "$( (sha512sum||shasum -a512)|sed -E 's/ .*//;s/(..)/\\x\1/g')"|base64|head -c43;}
    b512 <movie.mkv
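
The same check in Python, as a sketch that ports the shell one-liner step by step (sha512, standard base64 of the raw digest, first 43 characters) while reading the file in 1 MiB pieces so large files don't need to fit in memory. It is only a port of the shell function, so if your copyparty version reports checksums in a different form, compare against that instead.

```python
import base64
import hashlib


def b512(path: str, bufsize: int = 1024 * 1024) -> str:
    """sha512 of the file, base64 of the raw digest, cut to 43 characters,
    like the `b512` shell function."""
    h = hashlib.sha512()
    with open(path, "rb") as f:
        for buf in iter(lambda: f.read(bufsize), b""):
            h.update(buf)
    return base64.b64encode(h.digest()).decode("ascii")[:43]
```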

# up2k

quick outline of the up2k protocol; see [uploading](#uploading) for the web-client

* the up2k client splits a file into an "optimal" number of chunks
  * 1 MiB each, unless that becomes more than 256 chunks
  * tries 1.5M, 2M, 3M, 4M, 6M, ... until there are <= 256 chunks or the chunk size reaches 32M
* client posts the list of hashes, filename, size, last-modified
* server creates the `wark`, an identifier for this upload
  * `sha512( salt + filesize + chunk_hashes )`
  * and a sparse file is created for the chunks to drop into
* client uploads each chunk
  * header entries for the chunk-hash and wark
  * server writes chunks into place based on the hash
* client does another handshake with the hashlist; server replies with OK or a list of chunks to reupload
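
The chunk-size rule and the wark from the outline above, as a Python sketch. The size progression follows the numbers listed (1 MiB, then 1.5, 2, 3, 4, 6, ... up to 32 MiB); the hash encoding (a truncated sha512 in url-safe base64) and joining the wark fields with newlines are assumptions made for illustration, not a copy of copyparty's code, and in practice only the server knows the salt.

```python
import base64
import hashlib
import math
import os
from typing import List

MiB = 1024 * 1024


def chunk_size(filesize: int) -> int:
    """1 MiB, then 1.5, 2, 3, 4, 6, 8, 12, 16, 24, 32 MiB, stopping as soon
    as the file fits in <= 256 chunks or the chunk size reaches 32 MiB."""
    size, step = MiB, MiB // 2
    double_next = False  # the step doubles after every second increase
    while math.ceil(filesize / size) > 256 and size < 32 * MiB:
        size += step
        if double_next:
            step *= 2
        double_next = not double_next
    return size


def b64hash(data: bytes) -> str:
    # assumption: truncated sha512 in url-safe base64 (33 bytes -> 44 chars)
    digest = hashlib.sha512(data).digest()[:33]
    return base64.urlsafe_b64encode(digest).decode("ascii")


def hash_chunks(path: str) -> List[str]:
    """Client side: hash the file one chunk at a time."""
    csize = chunk_size(os.path.getsize(path))
    hashes = []
    with open(path, "rb") as f:
        for buf in iter(lambda: f.read(csize), b""):
            hashes.append(b64hash(buf))
    return hashes


def make_wark(salt: str, filesize: int, chunk_hashes: List[str]) -> str:
    """Server side: sha512( salt + filesize + chunk_hashes ).
    Joining the fields with newlines is an assumption of this sketch."""
    blob = "\n".join([salt, str(filesize)] + chunk_hashes)
    return b64hash(blob.encode("utf-8"))
```

so a 300 MiB file gets 200 chunks of 1.5 MiB, while anything larger than 8 GiB ends up with 32 MiB chunks and more than 256 of them.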

# dependencies

* `jinja2` (is built into the SFX)
  * pulls in `markupsafe` as of v2.7; use jinja 2.6 on py3.2

## optional dependencies

enable music tags:

* either `mutagen` (fast, pure-python, skips a few tags, makes copyparty GPL? idk)
* or `FFprobe` (20x slower, more accurate, possibly dangerous depending on your distro and users)

enable image thumbnails:

* `Pillow` (requires py2.7 or py3.5+)

enable video thumbnails:

* `ffmpeg` and `ffprobe` somewhere in `$PATH`

enable reading HEIF pictures:

* `pyheif-pillow-opener` (requires Linux or a C compiler)

enable reading AVIF pictures:

* `pillow-avif-plugin`
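
A quick way to see which of these are present before launching copyparty is a small Python check like the one below. It is a hypothetical helper, not something copyparty ships; the import names (`PIL`, `pyheif_pillow_opener`, `pillow_avif`) are what those pip packages are normally imported as, which is worth double-checking against your installed versions.

```python
import importlib.util
import shutil

# pip package name -> module name to look for
PY_MODULES = {
    "jinja2": "jinja2",
    "mutagen": "mutagen",
    "Pillow": "PIL",
    "pyheif-pillow-opener": "pyheif_pillow_opener",
    "pillow-avif-plugin": "pillow_avif",
}
# external programs that must be somewhere in $PATH
EXECUTABLES = ["ffmpeg", "ffprobe"]


def check_deps() -> dict:
    """Report which of the dependencies listed above are available."""
    found = {}
    for pkg, mod in PY_MODULES.items():
        found[pkg] = importlib.util.find_spec(mod) is not None
    for exe in EXECUTABLES:
        found[exe] = shutil.which(exe) is not None
    return found


if __name__ == "__main__":
    for name, ok in check_deps().items():
        print("%-22s %s" % (name, "found" if ok else "missing"))
```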

## install recommended deps

```
python -m pip install --user -U jinja2 mutagen Pillow
```

## optional gpl stuff

some bundled tools have copyleft dependencies, see [./bin/#mtag](bin/#mtag)

these are standalone programs and will never be imported / evaluated by copyparty

# sfx

currently there are two self-contained "binaries":

* [copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.py) -- pure python, works everywhere, **recommended**
* [copyparty-sfx.sh](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.sh) -- smaller, but only for linux and macos, kinda deprecated

launch either of them (**use sfx.py on systemd**) and it'll unpack and run copyparty, assuming you have python installed of course

pls note that `copyparty-sfx.sh` will fail if you rename `copyparty-sfx.py` to `copyparty.py` and keep it in the same folder because `sys.path` is funky

## sfx repack

if you don't need all the features you can repack the sfx and save a bunch of space; all you need is an sfx and a copy of this repo (nothing else to download or build, except for either msys2 or WSL if you're on windows)

* `724K` original size as of v0.4.0
* `256K` after `./scripts/make-sfx.sh re no-ogv`
* `164K` after `./scripts/make-sfx.sh re no-ogv no-cm`

the features you can opt to drop are:

* `ogv`.js, the opus/vorbis decoder which is needed by apple devices to play foss audio files
* `cm`/easymde, the "fancy" markdown editor

for the `re`pack to work, first run one of the sfx'es once to unpack it

**note:** you can also just download and run [scripts/copyparty-repack.sh](scripts/copyparty-repack.sh) -- this will grab the latest copyparty release from github and do a `no-ogv no-cm` repack; works on linux/macos (and windows with msys2 or WSL)

# install on android

install [Termux](https://termux.com/) (see [ocv.me/termux](https://ocv.me/termux/)) and then copy-paste this into Termux (long-tap) all at once:

```sh
apt update && apt -y full-upgrade && termux-setup-storage && apt -y install python && python -m ensurepip && python -m pip install -U copyparty
echo $?
```

after the initial setup, you can launch copyparty at any time by running `copyparty` anywhere in Termux

# dev env setup

```sh
python3 -m venv .venv
. .venv/bin/activate
pip install jinja2 # mandatory deps
pip install Pillow # thumbnail deps
pip install black bandit pylint flake8 # vscode tooling
```

in the `scripts` folder:

* run `make -C deps-docker` to build all dependencies
* `git tag v1.2.3 && git push origin --tags`
* create github release with `make-tgz-release.sh`
* upload to pypi with `make-pypi-release.(sh|bat)`
* create sfx with `make-sfx.sh`

# todo

roughly sorted by priority

* support pillow-simd
* readme.md as epilogue
* single sha512 across all up2k chunks? maybe
* reduce up2k roundtrips
  * start from a chunk index and just go
  * terminate client on bad data

discarded ideas

* separate sqlite table per tag
  * performance fixed by skipping some indexes (`+mt.k`)
* audio fingerprinting
  * only makes sense if there can be a wasm client and that doesn't exist yet (except for olaf which is agpl hence counts as not existing)
* `os.copy_file_range` for up2k cloning
  * almost never hit this path anyways
* up2k partials ui
  * feels like there isn't much point
* cache sha512 chunks on client
  * too dangerous
* comment field
  * nah
* look into android thumbnail cache file format
  * absolutely not

62

bin/README.md

Normal file

# [`copyparty-fuse.py`](copyparty-fuse.py)

* mount a copyparty server as a local filesystem (read-only)
* **supports Windows!** -- expect `194 MiB/s` sequential read
* **supports Linux** -- expect `117 MiB/s` sequential read
* **supports macos** -- expect `85 MiB/s` sequential read

filecache is default-on for windows and macos:

* macos readsize is 64kB, so speed ~32 MiB/s without the cache
* windows readsize varies by software; explorer=1M, pv=32k

note that copyparty should run with `-ed` to enable dotfiles (hidden otherwise)

also consider using [../docs/rclone.md](../docs/rclone.md) instead for ~5x performance

## to run this on windows:

* install [winfsp](https://github.com/billziss-gh/winfsp/releases/latest) and [python 3](https://www.python.org/downloads/)
  * [x] add python 3.x to PATH (it asks during install)
* `python -m pip install --user fusepy`
* `python ./copyparty-fuse.py n: http://192.168.1.69:3923/`

10% faster in [msys2](https://www.msys2.org/), 700% faster if debug prints are enabled:

* `pacman -S mingw64/mingw-w64-x86_64-python{,-pip}`
* `/mingw64/bin/python3 -m pip install --user fusepy`
* `/mingw64/bin/python3 ./copyparty-fuse.py [...]`

you could replace winfsp with [dokan](https://github.com/dokan-dev/dokany/releases/latest), let me know if you [figure out how](https://github.com/dokan-dev/dokany/wiki/FUSE)

(winfsp's sshfs leaks, doesn't look like winfsp itself does, should be fine)

# [`copyparty-fuse🅱️.py`](copyparty-fuseb.py)

* mount a copyparty server as a local filesystem (read-only)
* does the same thing except more correct, `samba` approves
* **supports Linux** -- expect `18 MiB/s` (wait what)
* **supports Macos** -- probably

# [`copyparty-fuse-streaming.py`](copyparty-fuse-streaming.py)

* pretend this doesn't exist

# [`mtag/`](mtag/)

* standalone programs which perform misc. file analysis
* copyparty can Popen programs like these during file indexing to collect additional metadata

# [`dbtool.py`](dbtool.py)

upgrade utility which can show db info and help transfer data between databases, for example when a new version of copyparty recommends wiping the DB and reindexing because it now collects additional metadata during analysis, but you have some really expensive `-mtp` parsers and want to copy over the tags from the old db

for that example (upgrading to v0.11.0), first move the old db aside, launch copyparty, let it rebuild the db until the point where it starts running mtp (colored messages as it adds the mtp tags), then CTRL-C and patch in the old mtp tags from the old db instead

so assuming you have `-mtp` parsers to provide the tags `key` and `.bpm`:

```
~/bin/dbtool.py -ls up2k.db
~/bin/dbtool.py -src up2k.db.v0.10.22 up2k.db -cmp
~/bin/dbtool.py -src up2k.db.v0.10.22 up2k.db -rm-mtp-flag -copy key
~/bin/dbtool.py -src up2k.db.v0.10.22 up2k.db -rm-mtp-flag -copy .bpm -vac
```

1100

bin/copyparty-fuse-streaming.py

Executable file

File diff suppressed because it is too large

592

bin/copyparty-fuseb.py

Executable file

#!/usr/bin/env python3
from __future__ import print_function, unicode_literals

"""copyparty-fuseb: remote copyparty as a local filesystem"""
__author__ = "ed <copyparty@ocv.me>"
__copyright__ = 2020
__license__ = "MIT"
__url__ = "https://github.com/9001/copyparty/"

import re
import os
import sys
import time
import stat
import errno
import struct
import threading
import http.client # py2: httplib
import urllib.parse
from datetime import datetime
from urllib.parse import quote_from_bytes as quote

try:
    import fuse
    from fuse import Fuse